Explore an AI Governance Framework: Practical Steps for Responsible AI

Learn how to implement an AI governance framework with practical risk controls, compliance, and responsible AI practices to protect your organization.

At its core, an AI governance framework is the rulebook for how your organization handles artificial intelligence. It's a formal system of policies, roles, and procedures that oversees how AI is designed, deployed, and managed across the board. Think of it as the essential playbook that ensures your AI efforts are safe, ethical, and actually line up with your company's values.

Why You Need an AI Governance Framework Right Now

Jumping into AI without a plan is a massive gamble. An AI governance framework isn't just more corporate red tape; it's the strategic blueprint that turns a powerful but risky technology into a real competitive advantage. Without it, you're exposed to regulatory fines, brand-damaging failures, and lost customer trust, often without seeing them coming.

A solid framework built on accountability, transparency, and fairness is the bedrock for any sustainable AI innovation.

This kind of proactive thinking gets you out of a reactive, problem-fixing mode. It lets you intentionally build systems that people—both customers and internal stakeholders—can actually rely on. This is absolutely critical as AI weaves its way deeper into our daily operations, whether it’s for internal tooling or your flagship customer-facing products.

The Global Push for Responsible AI

The drive for structured AI governance isn't just an internal debate; it's happening on a global scale. International bodies are setting standards that are rapidly shaping national laws, and what happens in one region often creates a ripple effect everywhere else.

Back in 2019, for instance, the Organisation for Economic Co-operation and Development (OECD) laid out five core principles that have become the de facto gold standard. These principles, which cover everything from human rights to transparency and accountability, were agreed upon by 42 countries and have directly influenced regulations in major economies like the EU and G20 nations. You can learn more about these foundational global frameworks to see where things are headed.

Simply put, ignoring these emerging standards is no longer a viable option. Companies that don't get ahead of this will face serious compliance headaches and risk being shut out of entire markets.

From Risk Mitigation to Strategic Advantage

Ultimately, strong governance changes the internal conversation from "What could go wrong?" to "How do we get this right from the start?" It builds a culture where teams feel empowered to experiment and innovate because they know the guardrails are in place.

By embedding ethical checks and robust controls directly into your AI development lifecycle, you do more than just dodge penalties. You build better, more reliable products that create real value and strengthen your brand's reputation for years to come.

This is the strategic foundation our AI strategy consulting services help organizations create. We ensure your AI solutions aren't just technically impressive, but also secure, scalable, and ready for whatever comes next. For any business serious about AI, a well-defined framework is the only place to start.

The Building Blocks of an Effective Governance Framework

A solid AI governance framework isn't just a single policy document; it's a living system built on several interlocking pillars. Each one serves a critical function, and together, they ensure your AI initiatives are effective, responsible, and aligned with your core business values. Without this structure, even the most promising AI projects can stumble into unforeseen risks.

Think of it like building a house. You wouldn’t start framing the walls before pouring a solid foundation. In the same way, a strong governance structure needs these foundational elements in place to support everything you build on top of it, from internal tools to major customer-facing applications. Your entire AI strategy depends on it.

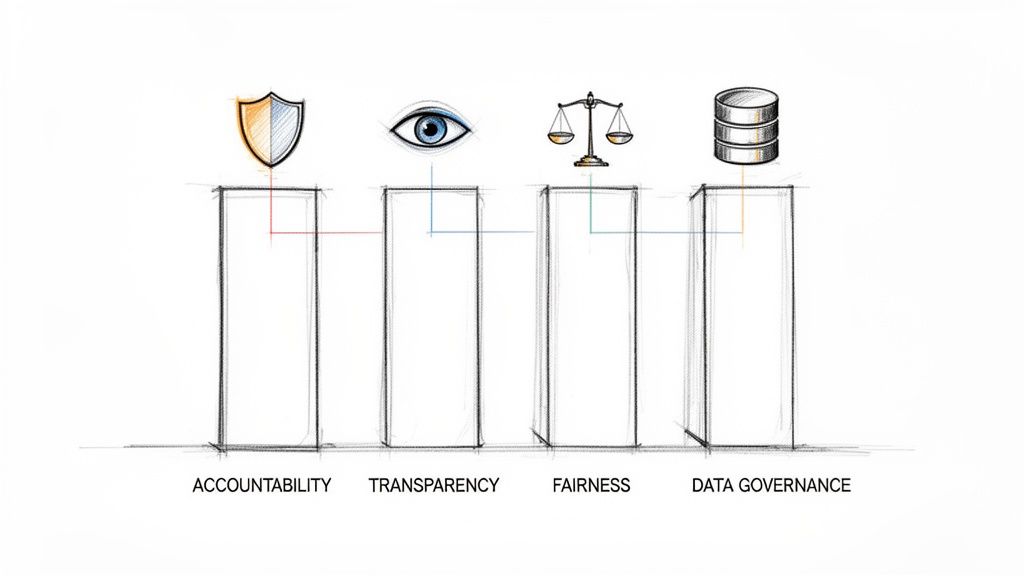

Establishing Clear Ethical Principles and Policies

Your ethical principles are the compass for your entire AI program. These are the high-level statements that define what "responsible AI" means to your organization. They push beyond simple compliance to answer the fundamental question: "What do we stand for?"

These principles should be clear and direct, addressing concepts like:

- Fairness: A commitment to actively find and root out discriminatory bias in your AI systems.

- Transparency: Providing clear, understandable explanations for how your models arrive at their decisions.

- Accountability: Ensuring a human is always ultimately responsible for the outcomes of an AI system.

- Safety: Rigorously testing systems to prevent them from causing harm, whether to users, your brand, or the environment.

But principles on a poster are just words. You have to translate them into actionable policies—this is where the abstract becomes concrete, giving your teams clear guidelines for their day-to-day work.

Defining Roles and Responsibilities

Ambiguity is the enemy of good governance. When everyone is responsible, no one is. That's why clearly defining roles and responsibilities is absolutely essential for creating accountability and making sure your governance processes are followed consistently.

A proven tool for this is the RACI matrix, which assigns a specific role for every task:

- Responsible: The person or team doing the actual work.

- Accountable: The one person who has the final say and is answerable for the outcome.

- Consulted: Experts or stakeholders who provide input and advice.

- Informed: People who just need to be kept in the loop on progress.

For instance, when auditing a new AI model for bias, a data scientist might be Responsible, the Head of AI Ethics Accountable, the legal team Consulted, and a business unit leader Informed. This simple framework cuts through the confusion and keeps decisions moving.
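
To make the RACI idea concrete, here is a minimal Python sketch of one matrix row for the bias-audit example above. The `RaciAssignment` class and `find_problems` helper are hypothetical names invented for illustration, not part of any standard library or tool. The single-string `accountable` field is one way to encode the rule that exactly one person is answerable.

```python
from dataclasses import dataclass, field


@dataclass
class RaciAssignment:
    """One row of a RACI matrix: who plays which role for a single task."""
    task: str
    responsible: list[str]          # people or teams doing the work
    accountable: str                # a single answerable owner, by design
    consulted: list[str] = field(default_factory=list)
    informed: list[str] = field(default_factory=list)


def find_problems(row: RaciAssignment) -> list[str]:
    """Return human-readable gaps; an empty list means the row is usable."""
    problems = []
    if not row.responsible:
        problems.append(f"{row.task}: nobody is Responsible")
    if not row.accountable.strip():
        problems.append(f"{row.task}: nobody is Accountable")
    return problems


# The bias-audit example from the text above.
bias_audit = RaciAssignment(
    task="Audit new AI model for bias",
    responsible=["Data scientist"],
    accountable="Head of AI Ethics",
    consulted=["Legal team"],
    informed=["Business unit leader"],
)
print(find_problems(bias_audit))  # prints []
```

A check like this could run over every row of your matrix so that tasks without an owner surface early, rather than during an incident.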

Comprehensive Risk Management and Compliance

Managing risk is at the heart of any governance framework. This goes way beyond just cybersecurity; it covers the unique ethical, operational, and reputational risks that come with AI. Your process needs to include identifying potential harms, assessing their likelihood and impact, and having solid mitigation strategies ready.

A huge piece of this puzzle is navigating the legal landscape. For any governance framework to be effective, legal compliance isn't optional—it's foundational. It's well worth your time to get up to speed by understanding AI law and seeing how emerging regulations will impact your work.

This proactive approach means you won't be blindsided by new regulations or unexpected model behavior. You're building resilience into your AI systems from the ground up.

Data Governance and Model Lifecycle Management

An AI model is only as good as the data it’s fed. That makes strong data governance a non-negotiable part of the equation. This practice covers the entire data lifecycle—from collection and storage to usage and disposal—to ensure your data is high-quality, secure, and handled ethically.

Just as critical is model lifecycle management. This means establishing clear standards and checkpoints for every single stage of a model's journey:

- Development: Building models according to established ethical principles and technical best practices.

- Validation: Kicking the tires with rigorous testing for performance, bias, and robustness before it goes live.

- Deployment: Rolling the model out into a production environment with the right monitoring in place.

- Monitoring: Continuously tracking performance to catch model drift, decay, or any unintended consequences.

- Retirement: Having a clear off-boarding plan to decommission models that are no longer effective or relevant.

By managing the full lifecycle, you maintain real control over your AI assets and ensure they keep delivering value safely and effectively over time.
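
One way to enforce those checkpoints is to treat the lifecycle as a small state machine, so a model cannot skip a stage. The sketch below is illustrative only: the stage names come from the list above, but the allowed transitions in `ALLOWED` are my own assumption about a reasonable policy, and yours may differ.

```python
from enum import Enum


class Stage(Enum):
    DEVELOPMENT = "development"
    VALIDATION = "validation"
    DEPLOYMENT = "deployment"
    MONITORING = "monitoring"
    RETIREMENT = "retirement"


# Assumed policy: which stages a model may move to from each stage.
ALLOWED = {
    Stage.DEVELOPMENT: {Stage.VALIDATION, Stage.RETIREMENT},
    Stage.VALIDATION: {Stage.DEVELOPMENT, Stage.DEPLOYMENT, Stage.RETIREMENT},
    Stage.DEPLOYMENT: {Stage.MONITORING, Stage.RETIREMENT},
    Stage.MONITORING: {Stage.DEVELOPMENT, Stage.RETIREMENT},
    Stage.RETIREMENT: set(),  # terminal: a retired model stays retired
}


def can_transition(current: Stage, target: Stage) -> bool:
    """True if the assumed policy allows moving from current to target."""
    return target in ALLOWED[current]


# A model cannot jump from development straight to production.
print(can_transition(Stage.DEVELOPMENT, Stage.DEPLOYMENT))  # prints False
print(can_transition(Stage.VALIDATION, Stage.DEPLOYMENT))   # prints True
```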

To bring this all together, here’s a look at how these core components function within a governance framework.

Table: Key Components of an AI Governance Framework

This table summarizes the essential pillars of an effective AI governance framework and their primary functions within an organization.

| Component | Primary Function | Key Activities |
|---|---|---|
| Ethical Principles & Policies | Establish the organization's "north star" for responsible AI. | Define principles (fairness, transparency); create actionable policies; train employees. |
| Roles & Responsibilities | Ensure clear ownership and accountability for AI initiatives. | Develop a RACI matrix; form an AI governance committee; define decision-making workflows. |
| Risk & Compliance | Proactively identify, assess, and mitigate AI-related risks. | Conduct risk assessments; monitor regulatory changes; map controls to legal requirements. |
| Data Governance | Ensure the quality, integrity, and ethical handling of data. | Establish data quality standards; implement data privacy controls; manage data lineage. |
| Model Lifecycle Management | Maintain control and oversight of AI models from creation to retirement. | Set up model validation protocols; implement continuous monitoring; create a model inventory. |
| Monitoring & Reporting | Provide visibility into the performance and compliance of AI systems. | Define key performance indicators (KPIs); develop governance dashboards; schedule regular audits. |

Having a clear view of these components and their activities is the first step in turning governance from a theoretical concept into a practical, operational reality.

How to Run an AI Risk Assessment That Actually Works

Talking about AI governance is one thing, but putting it into practice is where the real work begins. An AI risk assessment isn't some theoretical exercise you do in a conference room; it's a hands-on process to find, measure, and prioritize the real-world threats your AI systems could create. This is the bedrock of our AI requirements analysis and the critical input for a Custom AI Strategy report that turns these findings into a clear plan.

It all starts with a simple, foundational step: map out every single AI system your organization is using or even thinking about building. You simply can't govern what you don't know you have.

First, Build Your AI System Inventory

Before you can even think about risks, you need a complete picture of your AI landscape. This inventory can't be a one-and-done spreadsheet; it has to be a living document that tracks every model, app, and third-party tool that has AI under the hood.

For every system you log, make sure you capture the essentials:

- What it does: What business problem is this AI supposed to solve? Is it customer support, flagging fraud, or screening job candidates?

- What it eats: What data is it trained on and using day-to-day? Is it sensitive customer PII, or is it public, anonymized data?

- Who owns it: Who's the business owner accountable for its results, and which tech team keeps it running?

- Who it affects: Who are the people impacted by its decisions? Think customers, employees, or even business partners.

I can almost guarantee this first step will turn up some surprises. You'll likely find "shadow AI"—tools and platforms that individual teams have started using on their own, completely off the radar. Getting a full inventory is the only way to make sure your governance efforts cover all your bases.
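
If a spreadsheet feels too loose, the same inventory can live as structured records. Here is a rough Python sketch under my own assumptions: the `AISystemRecord` fields mirror the four questions above, the `sanctioned` flag is a hypothetical way to mark shadow AI, and the two example systems are invented.

```python
from dataclasses import dataclass


@dataclass
class AISystemRecord:
    name: str
    purpose: str                 # what it does
    data_sources: list[str]      # what it eats
    uses_pii: bool
    business_owner: str          # who owns it (accountable for results)
    technical_owner: str         # who owns it (keeps it running)
    affected_groups: list[str]   # who it affects
    sanctioned: bool = True      # False marks suspected "shadow AI"


def needs_attention(inventory: list[AISystemRecord]) -> list[str]:
    """Names of systems that are unsanctioned or missing an owner."""
    return [
        r.name
        for r in inventory
        if not r.sanctioned or not r.business_owner or not r.technical_owner
    ]


# Invented example entries, for illustration only.
inventory = [
    AISystemRecord(
        name="Resume screening model",
        purpose="Screen job candidates",
        data_sources=["Historical applications"],
        uses_pii=True,
        business_owner="Head of Talent",
        technical_owner="ML platform team",
        affected_groups=["Job applicants"],
    ),
    AISystemRecord(
        name="Team-adopted writing assistant",
        purpose="Draft marketing copy",
        data_sources=["Third-party hosted model"],
        uses_pii=False,
        business_owner="",
        technical_owner="",
        affected_groups=["Customers"],
        sanctioned=False,
    ),
]
print(needs_attention(inventory))  # prints ['Team-adopted writing assistant']
```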

Identify and Group Your AI-Specific Risks

Once your inventory is in good shape, it's time to start sniffing out the potential risks for each system. AI brings a whole new flavor of risk that goes way beyond traditional IT security.

I find it helpful to group them into a few key categories:

- Ethical and Reputational Risks: This is where the big, scary stuff like algorithmic bias lives. A hiring tool that consistently filters out qualified candidates from a certain demographic is a classic example. So is a medical diagnostic model that’s less accurate for a specific ethnicity. These are the kinds of failures that make headlines and can torpedo your brand's reputation.

- Operational Risks: These are the more mundane, day-to-day headaches. Imagine a customer service chatbot "hallucinating" and confidently telling customers the wrong refund policy. Our AI Automation as a Service offerings are designed with safeguards to minimize such issues.

- Security and Privacy Risks: AI models are a new attack surface. Bad actors can use techniques like data poisoning to corrupt your model or model inversion to try and extract the sensitive data it was trained on. If you're building custom healthcare software, for instance, a data breach isn't just a mistake; it's a massive compliance failure.

- Regulatory and Compliance Risks: Governments are waking up to AI, and new rules are popping up everywhere. Using a "black box" model whose decisions you can't explain could put you in direct violation of regulations that demand transparency.

This last point is becoming a huge issue, especially in tightly regulated fields like finance. The latest reports from regulators are full of red flags: AI agents running without any human in the loop, decisions so opaque they can't be audited, and models drifting so far from their original purpose they become unpredictable. When you add hallucinations and bias to the mix, you can see how the gap between what companies want to do with AI and what they can safely do is getting wider. This research on emerging fintech challenges breaks down why this is such a pressing problem.

Figure Out What to Tackle First: Likelihood vs. Impact

Okay, so now you have a long list of potential problems for every AI system. You can't fix everything at once, so you have to prioritize. A simple but effective way to do this is to score each risk on two scales: likelihood and impact.

I like using a basic 1-to-5 scale for both:

- Likelihood: How likely is this to actually happen? (1 = Almost Impossible, 5 = It's a Matter of Time)

- Impact: If it does happen, how bad will it be? (1 = Barely a Blip, 5 = Catastrophic)

Multiply those two numbers together, and you get a risk priority score.

Let's walk through an example: The AI Hiring Tool

- The Risk: The tool is biased against applicants from non-traditional universities.

- Likelihood: 4 (High. This is a common outcome if the training data was pulled from existing employee profiles, who mostly attended traditional schools).

- Impact: 5 (Catastrophic. This could easily lead to discrimination lawsuits, hefty regulatory fines, and a PR nightmare).

- Risk Score: 4 x 5 = 20

This simple math instantly clarifies your priorities. A risk that scores a 20 is an all-hands-on-deck, fix-it-now problem. A risk that scores a 2 can probably wait. This isn't about bureaucracy; it's about using a clear, data-driven method to focus your time and money where they will do the most good. This kind of systematic thinking is a non-negotiable part of any solid AI Product Development Workflow.
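
The scoring itself is easy to automate. The sketch below reproduces the hiring-tool arithmetic from the example above. The `priority_band` cut-offs (15 and 6) are hypothetical thresholds I picked for illustration, and the second risk in the list is invented to show the ranking.

```python
def risk_score(likelihood: int, impact: int) -> int:
    """Multiply two 1-to-5 ratings into a 1-to-25 priority score."""
    for name, value in (("likelihood", likelihood), ("impact", impact)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return likelihood * impact


def priority_band(score: int) -> str:
    """Map a score to an action band. The cut-offs are assumptions."""
    if score >= 15:
        return "fix now"
    if score >= 6:
        return "plan mitigation"
    return "monitor"


# (description, likelihood, impact)
risks = [
    ("Internal summarizer mislabels a low-stakes report", 2, 1),  # invented
    ("Hiring tool biased against non-traditional universities", 4, 5),
]

# Highest score first, so the top of the list is what to tackle first.
ranked = sorted(risks, key=lambda r: risk_score(r[1], r[2]), reverse=True)
for description, likelihood, impact in ranked:
    score = risk_score(likelihood, impact)
    print(f"{score:>2}  {priority_band(score):<16} {description}")
```

The validation in `risk_score` matters more than it looks: a stray 0 or 6 in a spreadsheet quietly distorts the ranking, so it is better to fail loudly.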

Turning Your AI Strategy Into Rules of the Road

A risk assessment shows you where the potholes are. Your policies are the guardrails and road signs that keep your teams on the right path. This is the moment where high-level strategy gets real—where we translate our AI governance framework into clear, enforceable rules that actually fit into how people work every day. These can't be dusty binders on a shelf; they have to be living documents that guide day-to-day decisions.

This shift from planning to doing is a make-or-break point in the AI Product Development Workflow. It’s where you set up the quality gates to ensure all the AI tools for business you build or buy are up to snuff.

Start with a Core AI Ethics Policy

First things first, you need a foundational AI ethics policy. Think of this as your organization's constitution for artificial intelligence. It spells out the core principles you’ve committed to—fairness, accountability, transparency—in plain English. It’s less about the technical nitty-gritty and more about setting a clear, unambiguous tone from the top.

This policy has to be understood by everyone, from the data scientists in the weeds to the marketing team crafting messaging. It needs to clearly state what your company stands for and, just as importantly, the ethical lines you absolutely will not cross.

For example, you might include a statement like this: "We will not use any AI system to make automated decisions about an individual's access to essential services (like loans or healthcare) without a clear and accessible process for human review and appeal." That simple sentence creates a powerful boundary for every project that follows.

Create Specific Playbooks for Data and Models

Once you have that ethical foundation, you can start drilling down into more specific policies for different operational areas. These are the detailed playbooks your technical teams will rely on daily. Two of the most critical areas you’ll need to cover are how you handle data and how you validate models.

You'll want to develop policies for a few key areas:

- Data Privacy & Usage Policy: This is your rulebook for how data is collected, stored, used for training, and ultimately, destroyed. It absolutely must line up with regulations like GDPR or HIPAA, especially if you're working in sensitive areas like our Healthcare AI Services.

- Model Validation & Testing Protocol: This policy defines the non-negotiable standards a model must meet before it gets anywhere near production. It should mandate tough testing for bias, accuracy, robustness, and security holes.

- Acceptable Use Policy for Third-Party AI: Your teams are probably already using external AI tools. You need rules that govern how they choose and use them, ensuring any third-party service meets your own company's ethical and security standards.
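
A model validation protocol usually boils down to a release gate: a few measured numbers compared against thresholds you agreed on in advance. Here is a minimal sketch, assuming accuracy and a demographic parity gap (the difference in positive-decision rates between groups) as the two metrics. The function names and the threshold defaults of 0.90 and 0.10 are hypothetical, and a real protocol would also cover robustness and security testing.

```python
def selection_rate(decisions: list[int]) -> float:
    """Share of positive (1) decisions in a list of 0/1 outcomes."""
    if not decisions:
        raise ValueError("a group needs at least one decision")
    return sum(decisions) / len(decisions)


def demographic_parity_gap(decisions_by_group: dict[str, list[int]]) -> float:
    """Largest difference in selection rate between any two groups."""
    rates = [selection_rate(d) for d in decisions_by_group.values()]
    return max(rates) - min(rates)


def passes_gate(
    accuracy: float,
    parity_gap: float,
    min_accuracy: float = 0.90,    # assumed threshold
    max_parity_gap: float = 0.10,  # assumed threshold
) -> bool:
    """A model ships only if it clears every threshold."""
    return accuracy >= min_accuracy and parity_gap <= max_parity_gap


# Invented decisions for two applicant groups (1 = advanced, 0 = rejected).
decisions = {
    "group_a": [1, 1, 0, 1],
    "group_b": [1, 0, 0, 0],
}
gap = demographic_parity_gap(decisions)
print(gap)                     # prints 0.5
print(passes_gate(0.95, gap))  # prints False: accurate, but the gap is too wide
```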

An Example of a Data Handling Policy Outline

To make this less abstract, here’s a simplified outline you can adapt for your own data handling policy. Taking a structured approach like this ensures you cover all your bases and provides much-needed clarity for anyone working with sensitive information.

| Section | Key Questions to Answer |
|---|---|
| 1. Data Sourcing | Where did we get this data? Do we have explicit consent to use it for AI training? Does the data actually represent the people it will impact? |
| 2. Data Labeling | Who is labeling the data? What's our quality control process to make sure the labels are accurate and don't introduce bias? |
| 3. Data Security | How is the data encrypted, both when it's stored and when it's being moved? Who has access, and how are we logging and auditing that access? |
| 4. Data Minimization | Are we only collecting and using the data that is absolutely essential for the model to work? Could we get the same result with less sensitive data? |
| 5. Data Retention | How long are we keeping this data? What’s the trigger and the exact process for securely deleting it when it's no longer needed? |
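
Some of these questions translate directly into automated checks. As one example, the retention row can be expressed as a simple date comparison. This is a sketch under assumptions: the `is_past_retention` helper and the 365-day window are hypothetical, and your actual retention periods should come from your legal and compliance teams.

```python
from datetime import date, timedelta


def is_past_retention(collected_on: date, retention_days: int, today: date) -> bool:
    """True once the data has been held longer than the retention window."""
    return today > collected_on + timedelta(days=retention_days)


# Data collected on 1 Jan 2023 with an assumed 365-day retention window.
collected = date(2023, 1, 1)
print(is_past_retention(collected, 365, date(2024, 1, 1)))  # prints False
print(is_past_retention(collected, 365, date(2024, 1, 2)))  # prints True
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the check easy to test and audit.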

Policies Are Useless Without Training

Look, writing policies is only half the job. Getting people to actually understand and follow them is what makes the difference. A solid AI governance framework needs a serious training and communication plan to back it up. You have to translate these documents into practical, role-specific training.

Your data scientists need to know the subtleties of bias detection, while your product managers need to know how to run an ethical review for a new AI feature. As we explored in our AI adoption guide, educating the entire company is the only way to build a real culture of responsibility. When you invest in training, you give every single employee the tools to be a steward of your AI governance framework. That's how you turn abstract principles into everyday practice and ensure governance isn't a roadblock, but an enabler of responsible innovation.

Your Phased Implementation Roadmap

An AI governance framework on paper is just a plan. The real work happens when you bring it to life, and that's a marathon, not a sprint. Trying to do everything at once is a recipe for chaos. A phased roadmap is the only way I've seen this work successfully, as it helps you manage complexity, get people on board, and build momentum without completely overwhelming the organization.

This structured approach lets you learn and adapt as you go. It ensures your governance model becomes a practical, well-oiled machine, not just a theoretical exercise collecting dust on a shelf.

Phase 1: The Foundation

First things first, you need to lay the groundwork. Before you can build a house, you need a solid foundation, and the same principle applies here. This initial stage is all about getting everyone aligned, understanding your current situation, and establishing crystal-clear ownership.

Here’s what you should focus on during this foundational period:

- Assemble a Governance Committee: This isn't just an IT or legal thing. Pull together a cross-functional team with leaders from legal, IT, data science, and the key business units that will actually use the AI. This group will be your steering committee.

- Run Initial Risk Assessments: Using the methods we've already covered, do a high-level risk assessment of your current and planned AI systems. This gives you a clear, immediate picture of where your biggest fires are.

- Define Core Principles and Policies: Draft your high-level AI ethics policy and the first set of critical guidelines. Start with the essentials, like your data handling protocols.

- Pick a Pilot Project: This is absolutely critical. Choose one or two well-defined, medium-risk AI projects to act as a testbed for your new governance controls. This is where you'll iron out all the kinks before a company-wide rollout.

Starting with a focused pilot project gives you a safe space to test your policies, train a small group, and get invaluable feedback. It’s an iterative process that helps ensure responsible and scalable AI implementation.

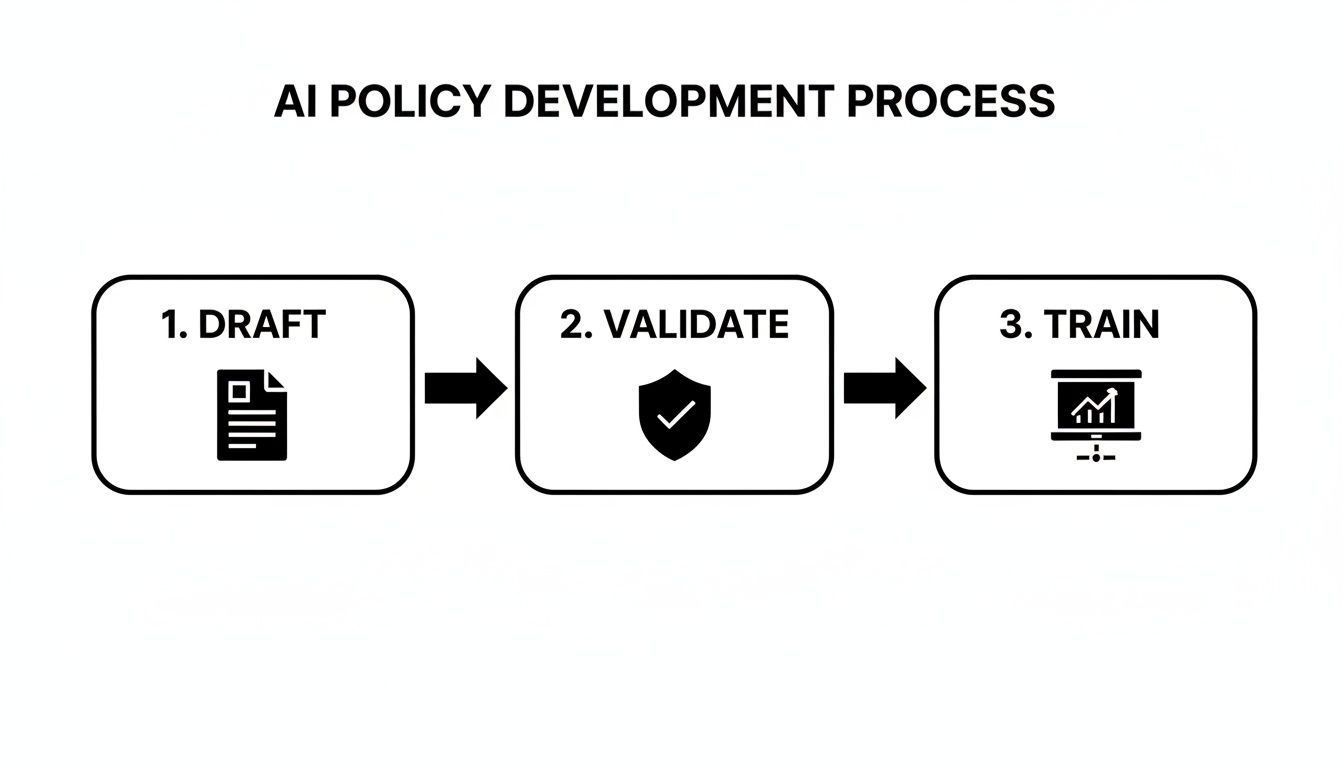

This simple workflow is a great way to think about operationalizing any single policy within this phase.

This Draft, Validate, and Train cycle makes sure policies aren't just written and forgotten. They get vetted by the right people, are genuinely understood, and are actually integrated into how teams work.

Phase 2: Integration and Embedding

With your foundation firmly in place, it’s time to embed governance directly into your daily operations and development cycles. The goal here is to make responsible AI the default setting, not an afterthought that people resent.

This phase is all about taking the wins from your pilot and scaling them across the organization. You'll want to integrate governance controls right into your existing AI product development workflow. In practice, this means building automated checks, review gates, and documentation requirements into the tools your teams are already using, like Jira or Azure DevOps.

Widespread training is a non-negotiable part of this stage. You have to move beyond the governance committee and educate everyone who touches AI—from developers and data scientists to product managers and marketers—on the new policies and what’s expected of them.

Don't underestimate the real-world consequences of a messy, unstructured rollout. A 2023 World Economic Forum analysis pointed out how fragmented AI governance creates a trust deficit. When Italy temporarily banned ChatGPT, the ban caused exposed firms to underperform by around 9%. That’s a direct financial hit, all because of inconsistent rules and a poor implementation plan.

Phase 3: Optimization and Continuous Improvement

AI governance is not a "set it and forget it" project. The technology, regulations, and risks are always shifting, which means your framework has to be a living, breathing system. This final phase is all about continuous improvement and adaptation.

Your focus now shifts to keeping the framework sharp and relevant:

- Regular Audits and Reviews: Schedule periodic audits of your AI systems against your policies. This is how you verify that the controls are actually working and spot areas that need tightening up.

- Monitoring and Reporting: Set up dashboards and reporting mechanisms to track your key governance metrics. This gives leadership a clear, at-a-glance view of the health and effectiveness of your entire AI program.

- Adapting to Change: Have a clear process for updating your framework when new technologies emerge, new regulations pop up, or you learn lessons from internal incidents.

This continuous loop of monitoring, auditing, and refining is what ensures your AI governance framework stays effective for the long haul. By taking this phased approach, you can successfully operationalize governance, build trust, and truly unlock the potential of your AI investments.
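
For the monitoring piece, one widely used drift signal is the Population Stability Index (PSI), which compares how a feature or score was distributed at training time against how it looks today. The sketch below is a minimal version that assumes you have already bucketed both distributions into matching bins of fractions. The function name and example numbers are mine, and the commonly cited rule of thumb that values above roughly 0.1 deserve a look is a convention, not a standard.

```python
import math


def population_stability_index(
    baseline: list[float], current: list[float], eps: float = 1e-6
) -> float:
    """PSI between two binned distributions given as fractions per bin."""
    if len(baseline) != len(current):
        raise ValueError("both distributions need the same number of bins")
    psi = 0.0
    for b, c in zip(baseline, current):
        # Clamp to eps so an empty bin does not cause log(0) or divide-by-zero.
        b, c = max(b, eps), max(c, eps)
        psi += (c - b) * math.log(c / b)
    return psi


# Invented example: scores were evenly spread at training time,
# but production traffic has shifted toward the upper bins.
training_bins = [0.25, 0.25, 0.25, 0.25]
production_bins = [0.10, 0.20, 0.30, 0.40]

print(population_stability_index(training_bins, training_bins))  # prints 0.0
drift = population_stability_index(training_bins, production_bins)
print(drift > 0.1)  # prints True: worth a closer look under the rule of thumb
```

A number like this is exactly the sort of metric that belongs on a governance dashboard, with the alert threshold written into your monitoring policy.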

Frequently Asked Questions About AI Governance

It's completely normal to have questions when you're setting up something as important as an AI governance framework. Even the clearest roadmap can't anticipate every concern. Let's tackle some of the most frequent questions I hear from leaders trying to get this right.

What’s the very first thing we should do to start AI governance?

Before you write a single policy, you need to establish clear ownership. The best way to do this is by forming a cross-functional AI governance committee. Pull in leaders from legal, IT, data science, and the business units that will actually be using the AI. This group's first job is to define your company's core AI principles. This ethical North Star aligns everyone on why you're building a governance framework and what lines your company simply won't cross.

How can a small business implement AI governance without a big budget?

You absolutely don't need a massive budget. Small businesses should take a pragmatic, lightweight approach by focusing on their highest-risk AI applications first. Instead of hiring a new team, assign governance responsibilities to existing staff working on AI. Leverage excellent free resources like the NIST AI Risk Management Framework as a starting point. The priority is to document processes, be transparent about AI use, and create straightforward checklists for data and model testing.

How often do we need to revisit our AI governance framework?

Your framework must be a living document. We recommend a formal review at least once a year or whenever there's a significant change. Triggers for a review include adopting new AI technologies (like generative AI), major new regulations (like GDPR updates), an AI-related security incident, or business expansion into new regions with different laws. While the formal review is annual, continuous monitoring of models for performance drift should be ongoing.

How does an AI governance framework fit with existing compliance programs?

Think of your AI governance framework as a specialized extension of your existing compliance programs like GDPR or HIPAA, not a replacement. It slots in to tailor general principles of data privacy and security to the unique challenges of AI. It specifically addresses risks that traditional frameworks don't cover, such as model bias, transparency in automated decisions, and the explainability of outputs, creating a unified and robust risk management strategy.

At Ekipa AI, we help you turn theory into practice. Our platform and our expert team are here to help you implement a solid AI governance framework that protects your business while you innovate responsibly. Discover how our AI strategy consulting can accelerate your journey.