A Practical AI Risk Management Framework For Healthcare

Discover a practical AI risk management framework for healthcare. Learn to build governance, define risks, and ensure patient safety for reliable AI adoption.

An AI risk management framework in healthcare is essentially a structured game plan. It combines governance, strict data and model controls, and ongoing oversight to make sure artificial intelligence is rolled out safely, ethically, and effectively. This isn't just about ticking boxes; it's about turning a powerful technology from a potential liability into a trusted clinical tool by getting ahead of risks to patient safety, privacy, and compliance.

Why AI Risk Management Is Critical in Modern Healthcare

Artificial intelligence has moved beyond the lab and is now actively shaping everything from patient diagnoses and treatment personalization to the nuts and bolts of hospital operations. But as AI becomes more embedded in clinical workflows, the stakes get higher. Once you look past the buzz, it becomes clear why having a formal system for managing AI risks is no longer a "nice-to-have"—it's a clinical imperative.

Without a structured approach, jumping into AI implementation can expose a healthcare organization to some serious blind spots. You could easily run into:

- Patient Safety Compromises: Imagine an algorithm in a diagnostic tool making a subtle error that leads to a misdiagnosis. Or a flawed system recommending a less-than-optimal treatment path. These aren't hypotheticals; they are real-world risks.

- Data Privacy Breaches: AI models thrive on massive amounts of sensitive patient data. This concentration of information creates new attack surfaces and vulnerabilities that could lead to HIPAA violations and, just as importantly, a complete breakdown of patient trust.

- Regulatory Non-Compliance: The regulatory landscape for AI in medicine is getting more complex by the day. Without a clear governance framework, it's easy to fall out of compliance, leading to hefty legal and financial consequences.

The Escalating Need for Proactive Governance

This isn't just theory. Top industry safety organizations are sounding the alarm. In a telling sign of the times, ECRI—a global patient safety organization—recently placed artificial intelligence at the very top of its annual list of the most significant health technology hazards.

They're not saying AI is bad; they're warning that deploying it without rigorous testing and oversight introduces profound, often hidden, risks to patients.

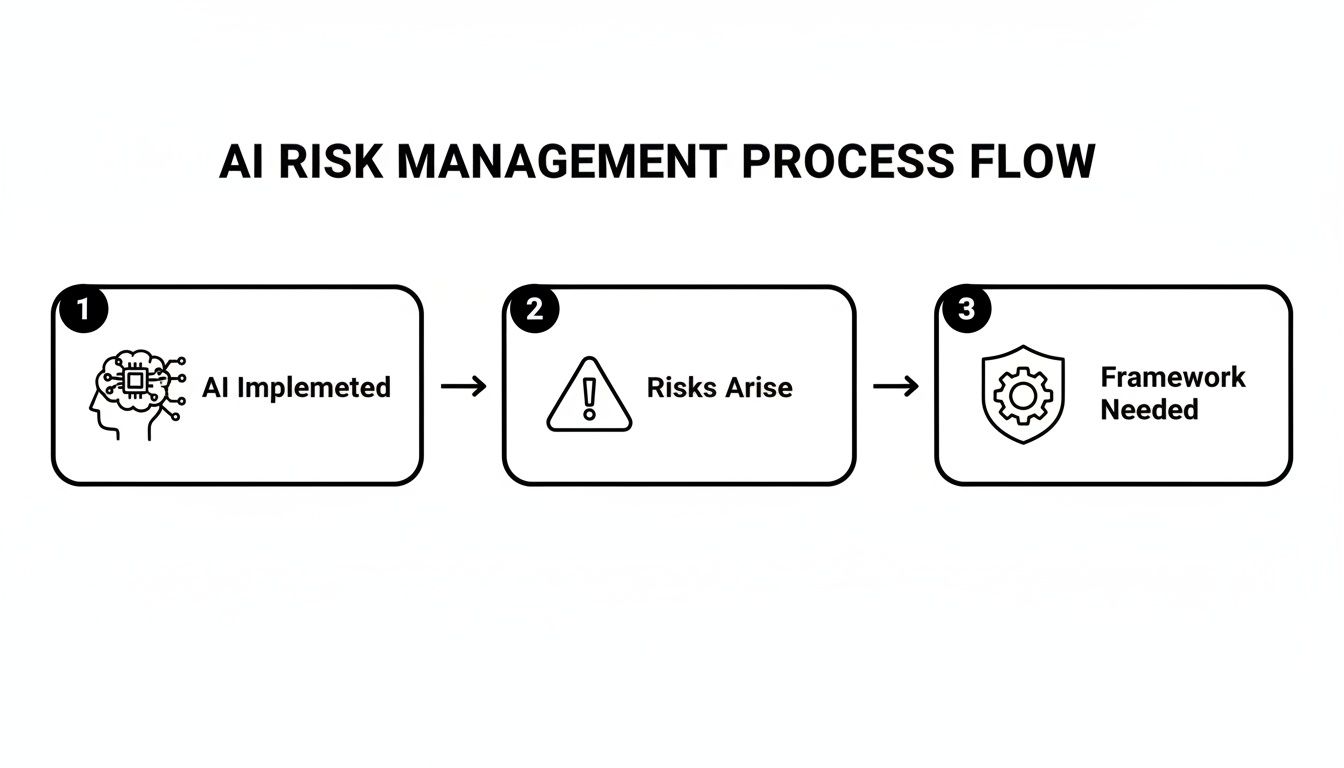

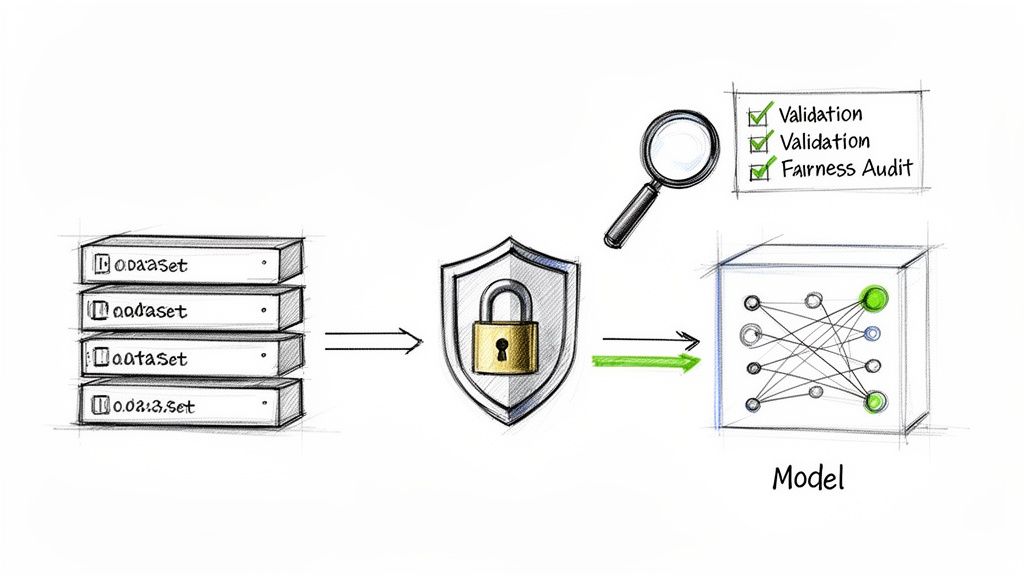

This flowchart maps it out perfectly: you implement AI, new risks naturally emerge, and a management framework becomes the essential bridge to ensuring the technology is both safe and effective.

What this shows is that risk isn't just a possibility; it's an inherent part of AI adoption. That's why building a protective framework from day one is non-negotiable.

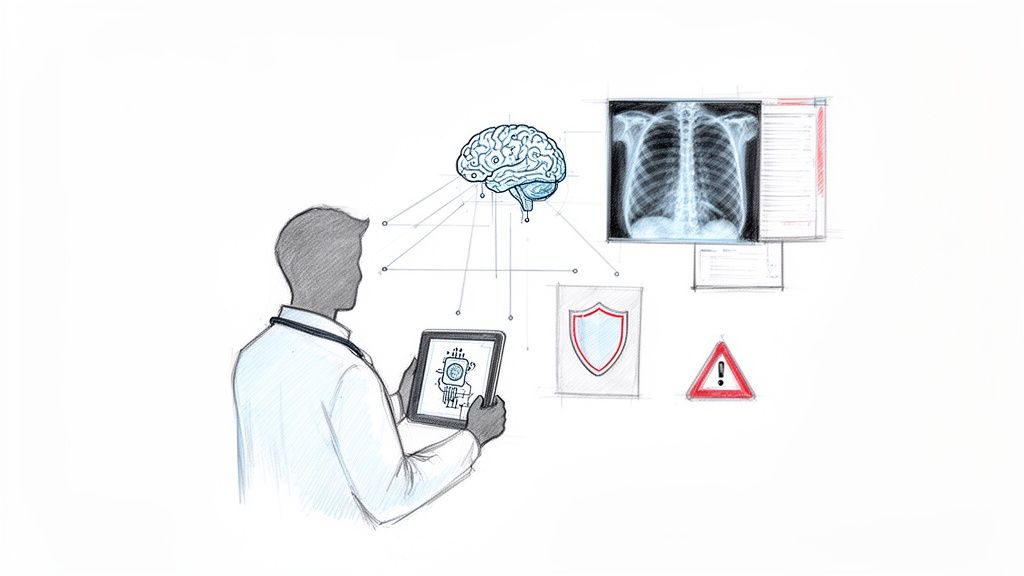

A Real-World Clinical Scenario

Let's make this tangible. Picture an AI tool designed to screen retinal images for early signs of diabetic retinopathy. If that algorithm was primarily trained on data from one demographic, it might be far less accurate when analyzing images from patients of other ethnic backgrounds.

This could lead to a wave of missed diagnoses and delayed treatments for an entire population. Without a solid risk management framework, this dangerous bias might not be discovered until after patients have already been harmed.

An effective framework would have mandated a fairness audit during development. It would require continuous performance monitoring across all patient demographics post-deployment and establish a clear, simple protocol for clinicians to flag suspected inaccuracies. It turns a potential crisis into a manageable, controlled process.

Building a robust framework starts with a solid foundation. A comprehensive Responsible AI guide provides the principles, and our specialized team at Healthcare AI Services can help you put those principles into practice, ensuring your AI initiatives are both innovative and incredibly safe.

Building Your AI Governance and Ownership Structure

Let's get straight to the point: you can't manage AI risk without clear accountability. It’s the bedrock of any serious AI risk management framework for healthcare. Without a defined structure for who owns what, even the most brilliant AI initiatives can drift into dangerous territory, leaving your patients and your organization exposed.

This isn't about adding layers of red tape. It’s about building responsibility directly into your organization's culture. The aim is to create a clear line of sight for every single AI system, from the moment it's just an idea on a whiteboard to the day it's retired. This is the kind of proactive thinking that separates successful AI adoption from a string of failed, risky experiments.

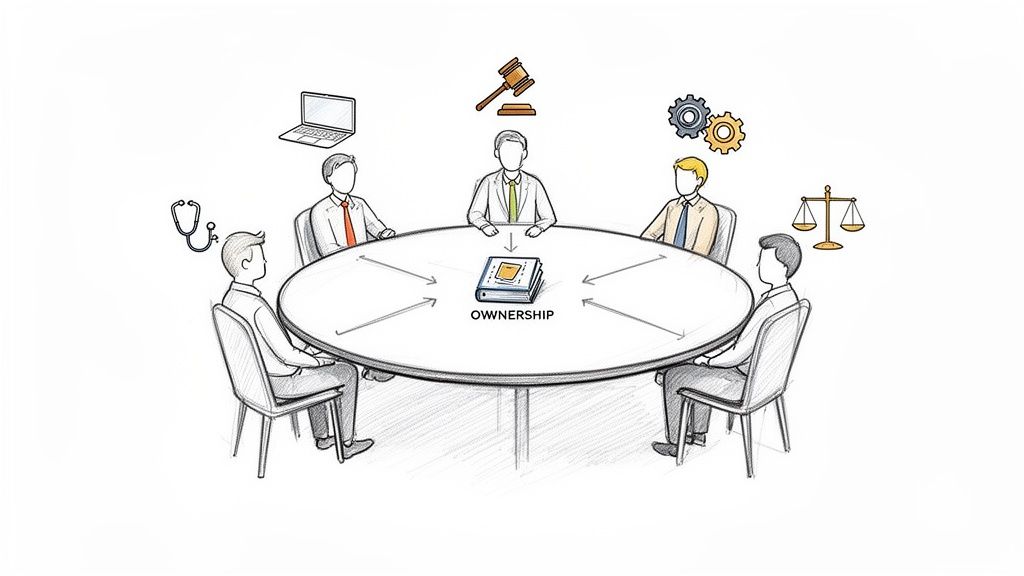

Assembling Your AI Governance Committee

Your first practical step is to put together a cross-functional AI governance committee. Think of this group as the central command for all things AI. They provide the necessary guidance, make the tough calls, and ensure you’re not making decisions in a vacuum. Honestly, one of the biggest threats I see in healthcare AI is siloed decision-making, and a diverse committee is your best shield against it.

Who should be in the room? You need a mix of experts who can see the puzzle from different angles:

- Clinical Leadership: Your Chief Medical Officer or a few senior physicians who live and breathe clinical workflows and can immediately spot potential patient safety issues.

- IT and Data Science: The CIO, CTO, or your top data scientists. These are the folks who know the technical realities, from data integrity to cybersecurity.

- Legal and Compliance: Your general counsel or compliance officers. They're the ones who will help you navigate the maze of HIPAA, FDA regulations, and the new wave of AI-specific laws.

- Operations and Administration: Leaders who can tell you exactly how a new AI tool will affect the day-to-day running of the hospital and its staff.

This committee isn't ceremonial. Their job is to draft, approve, and, most importantly, champion the organization's AI risk policies.

Defining Roles and Responsibilities

While the committee handles high-level oversight, real-world safety comes down to individual accountability. For every single AI tool you put into play, you need to assign unambiguous ownership. This prevents the all-too-common scenario where a problem pops up and everyone points fingers, assuming someone else is on it.

Here are the key roles to define for each AI system:

- The System Owner: This is the single, accountable person. Often a department head or clinical lead, they are ultimately responsible for the tool’s performance, safety, and value throughout its entire lifecycle.

- The Technical Lead: This individual is responsible for the nuts and bolts—the data feeds, model performance, and integration with your EHR and other systems. They’re the System Owner’s right hand on all things technical.

- The Clinical Champion: This needs to be a respected clinician who actually uses the tool. They become its advocate, a source of invaluable user feedback, and a key player in training their colleagues. They are your eyes and ears on the ground.

A clear ownership model means that when a doctor questions an AI-generated insight, they know exactly who to call. This creates a tight feedback loop, which is absolutely critical for quick incident response and making the tool better over time.

Documenting Decisions and Escalation Paths

Finally, good governance relies on good documentation. You need a transparent, auditable record of how and why critical decisions were made. Every major milestone—from choosing a vendor to approving a new clinical use case—should be formally documented by the governance committee.

Just as crucial is having a well-defined escalation path. What happens when a nurse on the night shift notices something strange, a potential bias or a safety concern? There must be a clear, no-blame process for them to flag it. That report needs to go straight to the System Owner and, if it’s serious enough, up to the governance committee for a swift resolution. Building this structure is a core part of our implementation support, because it ensures risks are caught and handled early, not after something has gone wrong.
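To make the escalation path concrete, here is a minimal Python sketch of report routing. It assumes a hypothetical three-level severity scale. Every report reaches the System Owner, and only high-severity reports also go to the governance committee. `Severity`, `ConcernReport`, and `route_report` are illustrative names and are not part of any particular incident-management product.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    """Hypothetical three-level severity scale for a frontline concern report."""
    LOW = 1        # e.g. a single odd but harmless output
    MODERATE = 2   # e.g. a suspected pattern of inaccuracy
    HIGH = 3       # e.g. suspected patient harm or systematic bias


@dataclass
class ConcernReport:
    tool_name: str
    reporter_role: str   # role only: a no-blame process does not require a name
    description: str
    severity: Severity


def route_report(report: ConcernReport,
                 committee_threshold: Severity = Severity.HIGH) -> list[str]:
    """Every report goes to the tool's System Owner; reports at or above
    the threshold are also escalated to the AI governance committee."""
    recipients = [f"System Owner ({report.tool_name})"]
    if report.severity.value >= committee_threshold.value:
        recipients.append("AI Governance Committee")
    return recipients


# Usage: a night-shift nurse flags a suspected safety issue.
report = ConcernReport(
    tool_name="sepsis-early-warning",
    reporter_role="night-shift nurse",
    description="Alerts appear to fire late for dialysis patients",
    severity=Severity.HIGH,
)
print(route_report(report))
# ['System Owner (sepsis-early-warning)', 'AI Governance Committee']
```

The threshold is a parameter, so a governance committee that wants to see moderate-severity reports too can lower it without changing the routing logic.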

Creating a Healthcare-Specific AI Risk Taxonomy

You can't manage what you can't define. That’s a fundamental truth in any complex field, but it’s especially critical when implementing AI in healthcare. Simply talking about "AI risk" is too broad to be useful. To get a real handle on potential problems, you need to build a detailed risk taxonomy—a shared vocabulary that allows everyone from clinicians to data scientists to pinpoint, discuss, and ultimately mitigate potential harm with precision.

Without this common language, "risk" just stays a vague, scary concept. A well-structured taxonomy, on the other hand, transforms abstract fears into concrete, actionable items. Think of it as the output of a thorough AI requirements analysis; it’s about mapping out the potential pitfalls long before a new tool ever touches a patient workflow.

This process isn't just an academic exercise. It's about breaking down risks into categories that make sense in a clinical environment. While every organization’s taxonomy will have its own nuances, most risks fall into four key domains.

Clinical Safety Risks

This is, without a doubt, the most critical category. These are the risks that directly impact patient outcomes. When an AI model gets it wrong here, the consequences can range from diagnostic delays and incorrect treatments to serious adverse events.

Here are a few real-world examples I've seen play out:

- Diagnostic Blind Spots: An AI designed to spot skin cancer, trained primarily on images of light skin tones, could have a dangerously high rate of false negatives for patients with darker skin. This isn't a theoretical problem; it’s a well-documented failure mode that leads directly to missed diagnoses.

- Treatment Recommendation Errors: Imagine a system that suggests chemotherapy dosages without accounting for a rare genetic marker. For a specific subgroup of patients, that recommendation could be toxic or completely ineffective.

- Alert Fatigue: In the ICU, an AI patient monitoring system that cries wolf too often with false positive alerts will eventually be ignored. Nurses become desensitized, creating a scenario where a genuinely critical event gets missed.

Operational Disruption Risks

Not all AI risks are clinical. Some can completely cripple a hospital's day-to-day functions. These operational risks create workflow bottlenecks, frustrate staff, and can quietly degrade the quality of care over time. They often come from a poor understanding of how a new tool actually fits into existing processes.

A classic mistake is deploying sophisticated AI tools without fully mapping their impact on human workflows. The most advanced AI is a failure if it makes a clinician's job harder, not easier.

Consider these scenarios:

- Workflow Integration Failure: A new AI transcription service is introduced, but it doesn't integrate cleanly with the hospital's EHR. Suddenly, doctors are spending more time copying and pasting notes than they did before, which not only negates the time savings but also introduces new opportunities for data entry errors.

- Over-reliance and Skill Atrophy: If a radiology department becomes totally dependent on an AI for preliminary reads, what happens to the skills of its junior radiologists? They may not get the reps needed to spot subtle anomalies on their own, a critical skill when the system is down or a case is particularly complex.

Data Integrity and Security Risks

Patient data is the lifeblood of healthcare, and it's protected by ironclad regulations like HIPAA. Risks in this bucket range from unauthorized access and data corruption to privacy breaches that can shatter patient trust and lead to crippling legal penalties. The sophisticated data pipelines that AI requires can introduce entirely new vulnerabilities if you're not careful, which is why securing them is a core focus of any professional custom healthcare software development effort.

These risks can show up in a few nasty ways:

- HIPAA Violations: An AI model trained on patient data that hasn't been perfectly de-identified could inadvertently leak protected health information (PHI) through its outputs. Even worse, it might be vulnerable to attacks that can reverse-engineer the training data to re-identify individuals.

- Data Poisoning: This is a more insidious threat. A bad actor could intentionally feed corrupted or misleading data into a diagnostic AI's training set. The result is a subtly skewed model that fails in specific, hard-to-detect ways, which could lead to widespread misdiagnoses down the line.

Ethical and Reputational Risks

Finally, we get to the complex challenges of fairness, equity, and transparency. Ethical failures don't just feel wrong; they cause tangible reputational damage, undermine the trust of the communities you serve, and can lead to discriminatory care—even if the AI is technically "accurate" for the majority of the population.

Here are two of the most common ethical tripwires:

- Algorithmic Bias: An AI tool designed to predict patient no-shows might learn to associate low-income ZIP codes with a higher risk. The system then "helpfully" starts deprioritizing people from those areas for appointment slots, systematically reducing their access to care.

- Lack of Transparency: Deploying a "black box" AI model for treatment planning is a recipe for trouble. If doctors can't understand why the AI is making a certain recommendation, they can't trust it. This creates enormous liability issues when an outcome is poor and no one can explain the clinical reasoning.

The following table provides a structured view of these risk categories, linking specific examples to their potential fallout for both patients and the healthcare organization.

Healthcare AI Risk Taxonomy and Potential Impact

This table outlines common AI risk categories in healthcare, providing specific examples and mapping them to their potential impact across different stakeholder groups.

| Risk Category | Example | Potential Impact on Patients | Potential Impact on the Organization |
|---|---|---|---|
| Clinical Safety | Diagnostic error (e.g., missed cancerous lesion in a scan) | Misdiagnosis, delayed treatment, adverse health outcomes | Malpractice lawsuits, loss of accreditation, damage to clinical reputation |
| Operational Disruption | Poor EHR integration causing data entry delays | Delayed care coordination, frustrating patient experience | Decreased staff productivity, increased operational costs, staff burnout |
| Data Integrity & Security | Inadvertent PHI leak from a model's output | Loss of privacy, potential for identity theft or fraud | Severe HIPAA fines, legal liability, erosion of patient trust |
| Ethical & Reputational | Biased algorithm unfairly flags a demographic for high-risk behavior | Systemic denial of care, discriminatory treatment, health inequities | Public backlash, loss of community trust, regulatory scrutiny |

By categorizing risks in this way, you move from a reactive, "what if" posture to a proactive stance. You can start assigning ownership, developing specific controls, and measuring the effectiveness of your mitigation strategies for each type of risk. This structured approach is the bedrock of a resilient AI governance program.
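One way to turn the taxonomy into something you can assign and track is a simple risk register. The sketch below is a hypothetical Python data model. The four categories come from the taxonomy, but the 1 to 5 likelihood and severity scales, the score threshold of 12, and all example entries are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskCategory(Enum):
    """The four risk domains described in the taxonomy."""
    CLINICAL_SAFETY = "Clinical Safety"
    OPERATIONAL_DISRUPTION = "Operational Disruption"
    DATA_INTEGRITY_SECURITY = "Data Integrity & Security"
    ETHICAL_REPUTATIONAL = "Ethical & Reputational"


@dataclass
class RiskEntry:
    system: str                 # the AI tool the risk belongs to
    category: RiskCategory
    description: str
    owner: str                  # the accountable System Owner
    likelihood: int             # 1 (rare) to 5 (almost certain): an assumed scale
    severity: int               # 1 (negligible) to 5 (catastrophic): an assumed scale
    controls: list[str] = field(default_factory=list)

    @property
    def score(self) -> int:
        # Likelihood x severity, the usual risk-matrix convention.
        return self.likelihood * self.severity


def top_risks(register: list[RiskEntry], min_score: int = 12) -> list[RiskEntry]:
    """Entries at or above min_score, highest score first."""
    flagged = [r for r in register if r.score >= min_score]
    return sorted(flagged, key=lambda r: r.score, reverse=True)


def uncontrolled(register: list[RiskEntry]) -> list[RiskEntry]:
    """Entries that have no mitigating control assigned yet."""
    return [r for r in register if not r.controls]


register = [
    RiskEntry("retinal-screening", RiskCategory.CLINICAL_SAFETY,
              "Lower sensitivity for under-represented groups",
              "Head of Ophthalmology", likelihood=3, severity=5,
              controls=["pre-deployment fairness audit", "subgroup monitoring"]),
    RiskEntry("ai-scribe", RiskCategory.OPERATIONAL_DISRUPTION,
              "Poor EHR integration causes copy-paste errors",
              "CMIO", likelihood=4, severity=2),
]
print([r.system for r in top_risks(register)])     # ['retinal-screening']
print([r.system for r in uncontrolled(register)])  # ['ai-scribe']
```

Pairing every entry with a named owner and an explicit list of controls makes gaps visible. In this example, the scribe risk scores lower but has no control at all.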

Putting Robust Data and Model Controls in Place

The performance of any AI system comes down to two things: the quality of its data and how thoroughly it's been tested. When we talk about a real-world AI risk management framework for healthcare, the technical controls you build around your data and models are the foundation. These are the practical guardrails that keep patient data safe, prevent biased algorithms from making unfair recommendations, and ultimately, make sure a clinician can actually trust what the AI is telling them.

These aren't just one-time checks you tick off a list. These controls have to be an integral part of the AI's entire lifecycle, from the moment you collect the first piece of data, through training and validation, and long after it’s been deployed. It's a constant state of diligence.

The healthcare industry has jumped into AI with both feet, and for good reason—85% of organizations are seeing a decent return on their investment. But that excitement is matched by some very real concerns. A recent survey highlighted that data quality (62%), getting the workforce ready (47%), and navigating regulations (42%) are still the biggest hurdles. These numbers make it crystal clear: strong controls aren’t just a nice-to-have, they're essential for any kind of lasting success.

Getting Data Privacy and Security Right

First things first: protecting patient data. This is about more than just basic HIPAA compliance; it means having a data governance plan built specifically for the unique pressures of AI development.

Your framework needs to enforce strict rules for:

- Data De-identification: Before any data ever touches a model, it has to be completely stripped of Personally Identifiable Information (PII) and Protected Health Information (PHI). This usually means going beyond simple redaction and using more sophisticated anonymization techniques.

- Access Control: Not everyone on your team needs to see the entire dataset. By implementing role-based access, you can ensure data scientists, engineers, and clinical validators only have access to the information they absolutely need to do their jobs.

- Secure Data Environments: All your model training and validation work should happen in isolated, secure sandboxes. This is non-negotiable for preventing data leaks and protecting your hard work from outside threats.

Think of your patient data as the most valuable—and most vulnerable—asset you have. Building a fortress around it isn't optional; it's the price of entry for doing AI in healthcare responsibly.
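Role-based access control can be expressed as an explicit allow-list of fields per role. This minimal Python sketch assumes hypothetical role names and field names. It shows least-privilege filtering only and is not a substitute for de-identification, which under HIPAA follows either the Safe Harbor method or Expert Determination.

```python
# Hypothetical role-to-field policy for one AI project. Direct identifiers
# (name, MRN, and so on) appear in no role's allow-list.
ROLE_FIELDS: dict[str, frozenset[str]] = {
    "data_scientist": frozenset({"age_band", "sex", "hba1c", "image_id", "label"}),
    "ml_engineer": frozenset({"image_id"}),
    "clinical_validator": frozenset(
        {"age_band", "sex", "hba1c", "image_id", "label", "model_output"}
    ),
}


def view_for_role(record: dict, role: str) -> dict:
    """Return only the fields this role may see.
    Unknown roles get an empty view (deny by default)."""
    allowed = ROLE_FIELDS.get(role, frozenset())
    return {key: value for key, value in record.items() if key in allowed}


record = {
    "mrn": "00012345", "name": "Jane Doe", "age_band": "40-49", "sex": "F",
    "hba1c": 7.1, "image_id": "img-0091", "label": 1, "model_output": 0.83,
}
print(view_for_role(record, "ml_engineer"))  # {'image_id': 'img-0091'}
print(view_for_role(record, "visitor"))      # {}
```

Keeping the policy as data rather than scattered `if` checks makes it easy for the governance committee to review and audit who can see what.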

Tackling Algorithmic Bias Head-On

Algorithmic bias is one of the most dangerous risks in healthcare AI. A model that works perfectly for one group of people but fails another isn't just a technical problem—it's a tool that actively creates health inequity.

You have to get ahead of this, and that means a multi-pronged attack:

- Start with Representative Data: The work begins with your data. Your team has to actively seek out and curate training data that truly reflects the diversity of your actual patient population—across race, ethnicity, gender, socioeconomic status, and age.

- Run Fairness Audits: During development, you have to hunt for bias. This means stress-testing the model with segmented data to see if its accuracy and error rates hold up across different demographic groups. If you see a discrepancy, you have to dig in and fix it before moving on.

- Use Bias Mitigation Techniques: When you find bias, there are technical fixes. You can re-weight the data to give more significance to underrepresented groups or apply algorithmic adjustments to correct for skewed results.

This relentless focus on fairness is a core part of how we approach building AI—the goal is always to create tools that are not only accurate but also equitable.
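A fairness audit and a basic re-weighting fix can be sketched in a few lines of plain Python. The example assumes a binary screening model and compares sensitivity (true positive rate) across groups. It flags any group trailing the best one by more than an assumed 5-percentage-point tolerance, then computes inverse-frequency sample weights. In practice you would likely track several metrics, not sensitivity alone.

```python
from collections import Counter, defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """True positive rate (sensitivity) for each demographic group.
    Groups with no positive cases are left out, since the rate is undefined."""
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def flag_gaps(rates, max_gap=0.05):
    """Groups whose sensitivity trails the best group by more than max_gap."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)


def inverse_frequency_weights(groups):
    """One weight per sample so that every group contributes the same total
    weight to training (a simple re-weighting mitigation)."""
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]


# Toy audit: every positive case in group B is missed.
y_true = [1, 1, 1, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 0, 1]
groups = ["A", "A", "A", "A", "B", "B", "A", "B"]
rates = sensitivity_by_group(y_true, y_pred, groups)
print(rates)             # {'A': 1.0, 'B': 0.0}
print(flag_gaps(rates))  # ['B']
```

Re-weighting addresses under-representation in the training data. It will not fix bias that comes from mislabeled or missing features, which is why the audit should be re-run after any mitigation.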

Why Model Explainability and Validation Can't Be Skipped

A "black box" AI has no business being in a clinical setting. Period. For any clinician to trust a recommendation from an algorithm, they need to understand how it reached its conclusion. This is where Explainable AI (XAI) comes in.

XAI techniques peel back the layers and show which data points were most influential in a model's prediction. That kind of transparency is absolutely essential for building trust with clinicians and for doing a proper root cause analysis when something goes wrong.
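One simple, model-agnostic way to see which inputs a model relies on is permutation feature importance: shuffle one feature at a time and measure how much accuracy drops. The sketch below is plain Python with a toy stand-in model. Note that this is a global measure. Explaining an individual recommendation to a clinician is usually done with per-prediction methods such as SHAP or LIME.

```python
import random


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Average drop in accuracy when one feature column is shuffled.
    A larger drop means the model leans more heavily on that feature."""
    rng = random.Random(seed)
    baseline = accuracy(y, [predict(row) for row in X])
    n_features = len(X[0])
    importances = []
    for j in range(n_features):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild each row with only feature j replaced by the shuffled value.
            X_perm = [list(row[:j]) + [v] + list(row[j + 1:]) for row, v in zip(X, column)]
            drops.append(baseline - accuracy(y, [predict(r) for r in X_perm]))
        importances.append(sum(drops) / n_repeats)
    return importances


# Toy "model": flags risk when feature 0 (say, a lab value) exceeds 0.5
# and ignores feature 1 entirely.
def predict(row):
    return 1 if row[0] > 0.5 else 0


X = [[0.9, 0.1], [0.8, 0.7], [0.7, 0.4], [0.6, 0.9],
     [0.4, 0.2], [0.3, 0.8], [0.2, 0.5], [0.1, 0.3]]
y = [predict(row) for row in X]
print(permutation_importance(predict, X, y))  # feature 1 scores 0.0
```

If a feature that clinicians consider irrelevant turns out to carry high importance, that is a signal worth investigating before deployment.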

As healthcare AI systems grow more complex, the code they run on becomes a potential point of failure in its own right. That's why reviewing and fixing AI-generated code is now a crucial part of model control.

Finally, every single AI model needs to go through rigorous clinical safety validation. This is completely separate from technical validation. It means putting the model into a simulated clinical environment and letting the actual end-users—doctors, nurses, radiologists—put it through its paces with realistic scenarios. This step is the ultimate safety check for catching risks before the tool ever touches a real patient. For teams building out their validation capabilities, platforms like our own VeriFAI can provide the structure needed to make this critical process repeatable and reliable.

Navigating the Complex Regulatory Landscape

Healthcare AI doesn't operate in a vacuum. It lives within a dense, tangled web of regulations that you absolutely have to navigate with care. A solid AI risk management framework for healthcare must have compliance baked in from the very beginning, ensuring every new tool meets strict legal and ethical standards. Get this wrong, and you're not just facing a technical problem—you're risking patient safety, data security, and your organization's legal standing.

This landscape isn't standing still, either. The push for AI regulation is gaining serious momentum. Just look at the numbers: forty-seven U.S. states have introduced more than 250 AI-related bills affecting healthcare, and 33 of those bills have already been signed into law. These laws increasingly focus on risk management frameworks as a way to balance life-saving innovation with essential patient safety. That balance is critical, especially when you consider that 86% of health systems are already using AI. You can dig deeper into these legislative trends over at the National Association of Counties.

Core Pillars of Healthcare AI Compliance

To make sense of this complex environment, I find it helps to focus on two foundational pillars of U.S. healthcare regulation that have a huge impact on AI. Mapping your framework's controls to these requirements isn't optional; it's how you stay compliant day in and day out.

- FDA and Software as a Medical Device (SaMD): If your AI tool is intended to diagnose, treat, or prevent a disease, the U.S. Food and Drug Administration (FDA) is likely to regulate it as Software as a Medical Device. That classification puts the tool under a microscope, demanding rigorous validation, quality management systems, and post-market surveillance. The FDA's guidance for AI/ML-based SaMD is especially important because it recognizes that these models can learn and evolve. A "predetermined change control plan" (PCCP) lets you specify planned model updates in advance so they can be made safely without a new marketing submission for each change.

- HIPAA and Patient Data Protection: The Health Insurance Portability and Accountability Act (HIPAA) is the law of the land for Protected Health Information (PHI). Any AI system that processes, stores, or transmits patient data has to follow its strict privacy and security rules. That means robust access controls, encryption, and clear audit trails are non-negotiable.

The biggest mistake I see teams make is treating compliance like a final checkbox. You can't do that. You have to weave regulatory requirements directly into your AI product development workflow. Your data privacy controls need to be designed with HIPAA in mind from day one, and your model validation process has to generate the evidence you might need for an FDA submission down the road.

Proactive Strategies for Staying Compliant

The regulatory environment is always in flux, with new state-level AI laws adding even more layers to an already complex system. You have to be proactive, not reactive. This is particularly true as you begin to scale your internal tooling and explore new real-world use cases.

Here are a few practical steps I recommend to stay on top of things:

- Appoint a Compliance Lead. Get a specific person or team within your AI governance structure to keep their finger on the pulse of the regulatory world. Their job is to track federal guidance and new state laws.

- Run Regular Compliance Audits. Don't wait for a problem to pop up. Periodically check your AI systems against current regulations to find gaps before they become critical failures.

- Lean on Specialists. Bringing in partners who specialize in custom healthcare software development can be a game-changer. They live and breathe this stuff, ensuring your solutions are built with compliance baked in from the first line of code, not just bolted on as an afterthought. This is a core part of our own Healthcare AI Services.

When you build these legal guideposts into your framework, compliance stops feeling like an obstacle and becomes a natural outcome of doing good, responsible work. If you need a hand building this capability, feel free to connect with our expert team.

Keeping a Watchful Eye: Monitoring and Incident Response

An AI risk management framework isn't a one-and-done document you file away. It's a living, breathing system. The moment an AI tool goes live in a clinical setting, your focus has to shift to constant vigilance and having a rock-solid plan for when things inevitably go sideways. This is where the rubber meets the road.

Good monitoring is so much more than just a system-is-up-or-down check. You need to be tracking the right key performance indicators (KPIs) that act as your canary in the coal mine. You’re not just looking for catastrophic failures; you’re hunting for the subtle drifts and shifts that whisper a problem is on the horizon.

What to Actually Monitor Once an AI is Deployed

To keep your AI tools safe and effective, your team needs to have its eyes on a few critical areas. This isn’t a task to be checked off a list; it's an ongoing process of observation and analysis.

- Model Performance Drift: This is a big one. It's when a model's accuracy slowly erodes over time. This happens because real-world patient data and clinical practices are always changing, making the data the model was originally trained on feel stale.

- Input Data Shifts: You have to watch the data being fed into the model like a hawk. Has your patient population changed? Are you using new imaging equipment? A major shift in the input data can make a once-perfectly validated model start spitting out garbage.

- Anomalous Outputs: Your system should have automated alerts that scream when an output looks weird or falls outside of expected ranges. These strange results are often the very first sign of a technical glitch, a broken data pipeline, or even a new clinical situation the model has never encountered.

We've found that setting up real-time dashboards to track these metrics is non-negotiable. This kind of proactive oversight is a core part of our AI Automation as a Service, because it's the only way to ensure AI tools stay trustworthy long after the go-live party.
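Two of these checks, input data shift and anomalous outputs, can be sketched in plain Python. The example uses the Population Stability Index (PSI) to compare a feature's live distribution against its validation-time baseline. It also includes a range check that flags out-of-bounds or NaN risk scores. The bin edges, the 1e-4 floor for empty bins, and the 0.25 alert threshold are all assumptions to tune for each tool. The 0.25 threshold in particular is a rule of thumb borrowed from credit-risk modeling, not a clinical standard.

```python
import bisect
import math


def _bin_shares(values, edges):
    """Share of values falling in each bin defined by sorted edges."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        i = min(max(i, 0), len(counts) - 1)  # clamp out-of-range values into the end bins
        counts[i] += 1
    # Floor empty bins at a small epsilon so the log below stays finite.
    return [max(c / len(values), 1e-4) for c in counts]


def psi(baseline, live, edges):
    """Population Stability Index between the validation-time distribution
    of a feature and what the model is seeing now."""
    expected = _bin_shares(baseline, edges)
    actual = _bin_shares(live, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def out_of_range(outputs, low=0.0, high=1.0):
    """Indices of outputs outside [low, high]; NaN also fails the comparison."""
    return [i for i, p in enumerate(outputs) if not (low <= p <= high)]


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9]
live = [0.8, 0.85, 0.9, 0.95, 0.99, 0.9, 0.8, 0.85, 0.9]
drift = psi(baseline, live, edges)
if drift > 0.25:  # assumed alert threshold (rule of thumb)
    print(f"Input shift alert: PSI = {drift:.2f}")
print(out_of_range([0.2, 1.3, float("nan"), -0.1, 0.9]))  # [1, 2, 3]
```

PSI only detects that the inputs have moved. Confirming that accuracy has actually degraded still requires comparing predictions against confirmed clinical outcomes as they become available.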

Your AI-Specific Incident Response Playbook

When an alert fires or a doctor reports a problem, you can't afford to scramble. A generic IT incident plan just won't work here; you need a playbook built specifically for the unique quirks of AI.

Your response plan has to be designed for speed and clarity. The absolute priority is to immediately contain any potential patient harm, figure out what went wrong, and communicate clearly with everyone involved—from the doctors on the floor to the governance committee.

A solid AI incident response plan must clearly define:

- Containment: What are the first-response steps? Options range from pulling the AI tool offline immediately to rolling back to a previous stable version, or simply requiring a human to review all of its outputs until you sort things out.

- Root Cause Analysis: You need a systematic way to dig in and find the "why." Was it a data problem? A flaw in the model itself? Or maybe an issue with how the tool was integrated into the workflow? Getting to the root cause is the only way to prevent it from happening again.

- Correction and Redeployment: Lay out the exact steps for fixing the issue, re-validating the model (this is crucial), and safely bringing it back into the clinical workflow.

- Communication: Who gets told what, and when? Create a clear protocol. This chain should include the clinicians who rely on the tool, the system owner, the AI governance committee, and in some cases, you might even have to notify regulatory bodies.
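The four elements above can be encoded so that no step is skipped under pressure. This hypothetical Python sketch assumes a simple containment rule: human review when no harm is suspected, otherwise rollback if a validated version exists, otherwise take the tool offline. It also adds a redeployment gate that requires both a recorded root cause and re-validation. The names and the rule itself are illustrative, and your governance committee would set the real policy.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Containment(Enum):
    HUMAN_REVIEW = "route every output to a clinician for manual review"
    ROLLBACK = "roll back to the last validated model version"
    TAKE_OFFLINE = "disable the tool"


@dataclass
class Incident:
    tool: str
    patient_harm_suspected: bool
    last_validated_version: Optional[str] = None
    root_cause: Optional[str] = None
    revalidated: bool = False
    notified: list[str] = field(default_factory=list)

    def contain(self) -> Containment:
        """Hypothetical rule: if harm is suspected, the current version stops
        making unreviewed recommendations immediately."""
        if not self.patient_harm_suspected:
            return Containment.HUMAN_REVIEW
        if self.last_validated_version is not None:
            return Containment.ROLLBACK
        return Containment.TAKE_OFFLINE

    def notify(self, reportable_to_regulator: bool = False) -> list[str]:
        """Communication chain: clinicians, System Owner, committee, regulator if required."""
        self.notified = ["clinicians using the tool", f"System Owner ({self.tool})",
                         "AI Governance Committee"]
        if reportable_to_regulator:
            self.notified.append("regulatory body")
        return self.notified

    def can_redeploy(self) -> bool:
        """Redeploy only once a root cause is recorded and the fix is re-validated."""
        return self.root_cause is not None and self.revalidated


incident = Incident("retinal-screening", patient_harm_suspected=True,
                    last_validated_version="v2.3")
print(incident.contain().value)  # roll back to the last validated model version
incident.notify()
incident.root_cause = "new camera model changed image contrast"
print(incident.can_redeploy())   # False: the fix has not been re-validated yet
```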

Building these monitoring and response protocols is what makes an AI risk management framework for healthcare truly resilient. If you need some help implementing these critical safeguards, feel free to connect with our expert team.

Common Questions on AI Risk Management in Healthcare

As you start to weave AI into your clinical operations, a lot of important questions are going to come up. It's only natural. Here are some of the most common ones we hear from healthcare leaders and technical teams, along with some straightforward answers.

Where’s the Best Place to Start?

It’s easy to get bogged down, so start small and focused. The first real step is pulling together a dedicated governance committee. You'll want a mix of people in the room: clinicians, IT folks, your legal counsel, and key administrators. Their first job? To hash out and agree on your organization's guiding principles for using AI responsibly.

Once you have those principles, don't try to boil the ocean. Pick one high-value, but manageable, pilot project. This gives you a real-world testing ground to build and refine your risk framework before you even think about rolling it out more widely. Getting some early guidance from an AI strategy consulting partner can help you nail this first project and avoid common pitfalls.

How Do We Actually Deal With Algorithmic Bias?

This is a big one, and there's no magic bullet. Tackling bias is a continuous commitment, not a one-and-done task.

It all starts with your data. You absolutely must ensure the data you train your models on is a true reflection of your patient community. If it isn't, bias is almost guaranteed. As you build the model, you have to actively check for it using fairness metrics to spot any performance dips across different patient groups (like age, ethnicity, or gender).

But the work doesn’t stop at launch. You need systems in place to continuously monitor for new biases that crop up over time. Pairing this with explainability tools that show why a model made a certain decision is a critical part of any responsible AI solution.

What Are the Key Regulations We Can’t Afford to Ignore?

The regulatory landscape depends entirely on what the AI tool actually does. If it's helping with diagnosis or suggesting treatments, you're almost certainly looking at the FDA's Software as a Medical Device (SaMD) rules. And, of course, any tool that touches patient information has to be rock-solid on HIPAA compliance.

On top of that, more and more states are rolling out their own AI-specific laws around transparency and accountability. This is not an area to guess. Getting your legal and compliance experts involved from day one is non-negotiable.

How Can We Get Our Clinicians to Trust and Use These Tools?

Trust isn't something you can mandate; you have to earn it. The key ingredients are transparency, reliability, and most importantly, inclusion.

Bring clinicians into the conversation from the very beginning—when you're still just evaluating or designing the tool. They need to be part of the process, not have it dictated to them.

Provide crystal-clear training on what a specific AI tool does well and, just as crucially, what its limitations are. The tool shouldn't feel like a black box: use explainability (XAI) features that let a doctor see the reasoning behind an AI-generated insight. Finally, create an easy, no-fuss way for clinicians to give feedback or flag problems. When they feel like partners in ensuring patient safety, adoption happens naturally. If you need help structuring this, it's often a good idea to connect with an expert team.

What's the Difference Between AI Governance and AI Risk Management?

Think of AI governance as the overall rulebook and AI risk management as the specific game plan for enforcing those rules. Governance sets the high-level principles, policies, and ownership structure. Risk management is the hands-on process of identifying, assessing, and mitigating specific threats within that governance structure. You can't have effective risk management without strong governance.

How Often Should We Review Our AI Risk Framework?

Your AI risk framework should be a living document, not something that collects dust. A good practice is to conduct a formal review at least once a year. However, you should also trigger a review anytime there's a significant change, such as deploying a major new AI system, a change in regulations, or after a serious incident.
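The review policy above is simple enough to express as a check that could run in a governance dashboard. This Python sketch reads "at least once a year" as a 365-day interval. It treats each event-driven trigger as a boolean flag that someone on the governance committee sets. The function name is hypothetical.

```python
from datetime import date, timedelta

ANNUAL = timedelta(days=365)  # assumed reading of "at least once a year"


def review_triggers(last_review: date, today: date, *, major_new_system: bool = False,
                    regulation_changed: bool = False, serious_incident: bool = False) -> list[str]:
    """Reasons the AI risk framework is due for review; an empty list means none."""
    reasons = []
    if today - last_review >= ANNUAL:
        reasons.append("annual review due")
    if major_new_system:
        reasons.append("major new AI system deployed")
    if regulation_changed:
        reasons.append("relevant regulation changed")
    if serious_incident:
        reasons.append("serious incident occurred")
    return reasons


print(review_triggers(date(2024, 3, 1), date(2024, 9, 1)))  # []
print(review_triggers(date(2024, 3, 1), date(2024, 9, 1), serious_incident=True))
# ['serious incident occurred']
```

Returning the reasons rather than a bare yes/no gives the committee a record of why each review was opened.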

Can We Use a Third-Party AI Tool, or Do We Need to Build Our Own?

You can absolutely use third-party AI tools, but it doesn't absolve you of responsibility. Your risk management framework must include a rigorous vendor assessment process. You need to scrutinize their data security, model validation processes, and how they handle bias. Make sure your contract clearly defines liability and grants you the right to audit their systems. As we explored in our AI adoption guide, choosing whether to buy or build is a critical strategic decision. If you decide to buy, you can always generate a Custom AI Strategy report to evaluate vendors.