A Practical Guide to Building Scalable AI Solutions for Healthcare

Discover a practical framework for building scalable AI solutions for healthcare, focusing on governance, clinical validation, and delivering measurable ROI.

Building scalable AI solutions for healthcare isn't about chasing the latest tech trend; it's a methodical process that marries real clinical needs with smart technical execution. It all starts with a clear strategic foundation. You have to identify the high-impact problems and make sure they line up with your organization's goals before a single line of code is written. Honestly, this initial planning is the single most critical factor for long-term success and delivering real value in such a complex, regulated field.

Building Your Strategic Foundation for Healthcare AI

Any successful AI project in healthcare begins with a rock-solid strategy. This groundwork is what prevents costly detours and ensures the final product actually solves a genuine problem for clinicians or patients. The journey from a vague idea to a concrete plan starts by deeply understanding your requirements and aligning projects with core goals—whether that’s improving patient outcomes, making operations smoother, or speeding up research.

This foundational stage is all about asking the right questions from day one. It's not just about the technology; it's about solving real-world problems. I've seen the initial excitement around AI lead teams to jump into complex projects without first confirming if they're even practical or valuable.

A common mistake is to chase the most advanced technology instead of the most pressing problem. The most effective AI solutions are often the simplest ones that solve a significant, everyday pain point for healthcare professionals.

Defining Clear Objectives and Use Cases

First things first: you need to move beyond the buzzwords. What does "AI" actually mean for your organization? What specific challenges are you trying to solve? Vague goals like "improving efficiency" won't cut it because you can't measure them. Get specific and focus on tangible outcomes.

A few examples of well-defined objectives:

Reduce diagnostic errors in radiology reports by 15%.

Automate patient appointment scheduling to free up 20 hours of administrative staff time per week.

Predict 30-day hospital readmission risks for cardiac patients to trigger early intervention.

Shorten the pre-clinical phase of drug discovery by analyzing genomic data more rapidly.

This level of detail is essential. When an objective is this clear, it's far easier to measure success, build a business case, and get everyone on board. Crafting a Custom AI Strategy report always starts with this kind of deep dive into what the organization truly needs.

Prioritizing Your AI Use Cases

Once you have a list of potential projects, you have to prioritize them. A good way to do this is by mapping them against key criteria like clinical impact, feasibility, and potential return. This helps you focus on the initiatives that will deliver the most value, a crucial step when resources are finite.

Here’s a simple framework to help guide that conversation:

Use Case Prioritization Matrix for Healthcare AI

| Priority Level | Example Use Case | Clinical Impact | Technical Feasibility | Data Availability | Estimated ROI |
|---|---|---|---|---|---|
| High | AI-assisted diabetic retinopathy screening | High (prevents blindness) | High (proven models) | Good (retinal scans) | High |
| Medium | Predicting patient no-shows | Medium (improves efficiency) | High (standard ML) | Good (scheduling data) | Medium |
| Medium | NLP for summarizing clinical notes | High (reduces burnout) | Medium (requires custom tuning) | Variable | Medium-High |
| Low | Real-time sepsis prediction | Very High | Low (complex, high stakes) | Challenging (needs real-time data) | High (if successful) |

This matrix isn't set in stone, but it forces a structured discussion. A project might have a massive potential impact, but if you don't have the data or technical expertise, it’s not the right place to start.
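If you want to make that discussion a little more concrete, one option is a simple weighted score. The sketch below is only an illustration: the 1-to-5 ratings are my own rough translation of the matrix above, and the weights in `WEIGHTS` are hypothetical numbers you would agree on with your clinical and finance stakeholders.

```python
from dataclasses import dataclass

# Hypothetical weights (they sum to 1.0); adjust them to your organization's priorities.
WEIGHTS = {
    "clinical_impact": 0.35,
    "feasibility": 0.25,
    "data_availability": 0.20,
    "roi": 0.20,
}


@dataclass
class UseCase:
    name: str
    clinical_impact: int    # 1 (low) to 5 (very high)
    feasibility: int        # 1 (hard) to 5 (proven approach)
    data_availability: int  # 1 (scarce) to 5 (readily available)
    roi: int                # 1 (low) to 5 (high)

    def score(self) -> float:
        """Weighted sum of the four criteria."""
        return sum(getattr(self, key) * weight for key, weight in WEIGHTS.items())


# Ratings below are illustrative guesses, not measured values.
use_cases = [
    UseCase("Diabetic retinopathy screening", 4, 4, 4, 4),
    UseCase("Patient no-show prediction", 3, 4, 4, 3),
    UseCase("Clinical note summarization", 4, 3, 3, 4),
    UseCase("Real-time sepsis prediction", 5, 1, 2, 4),
]

ranked = sorted(use_cases, key=lambda u: u.score(), reverse=True)
for u in ranked:
    print(f"{u.score():.2f}  {u.name}")
```

With these example numbers, sepsis prediction lands last despite its top clinical impact, because low feasibility and thin data drag the score down. That is exactly the trade-off the matrix is meant to surface.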

Aligning AI Initiatives with Business Goals

Every AI project must directly support the organization's broader mission. If it doesn't, it's a distraction, no matter how technically impressive.

The recent explosion in healthcare AI adoption really drives this point home. By 2025, an estimated 63% of healthcare professionals will be actively using some form of AI. Their top goals? Speeding up R&D (24%), improving patient outcomes (22%), and delivering better clinical insights (22%). This shows that leading organizations are laser-focused on directing their investments toward what matters most.

By grounding your AI roadmap in clear, measurable business objectives, you create a powerful story that helps secure funding and drives adoption down the line. It ensures that every dollar and every hour you invest contributes directly to the organization's long-term vision.

Mastering Data Governance and Regulatory Compliance

In healthcare, data is a double-edged sword. It’s your most powerful asset for innovation, but it's also your greatest liability. If you're building AI solutions for healthcare, you simply can't afford a casual approach to data governance. This goes way beyond just ticking compliance boxes—it's about building a foundation of trust with patients, clinicians, and regulators. One mistake can trigger massive legal penalties and, even worse, destroy confidence in your technology.

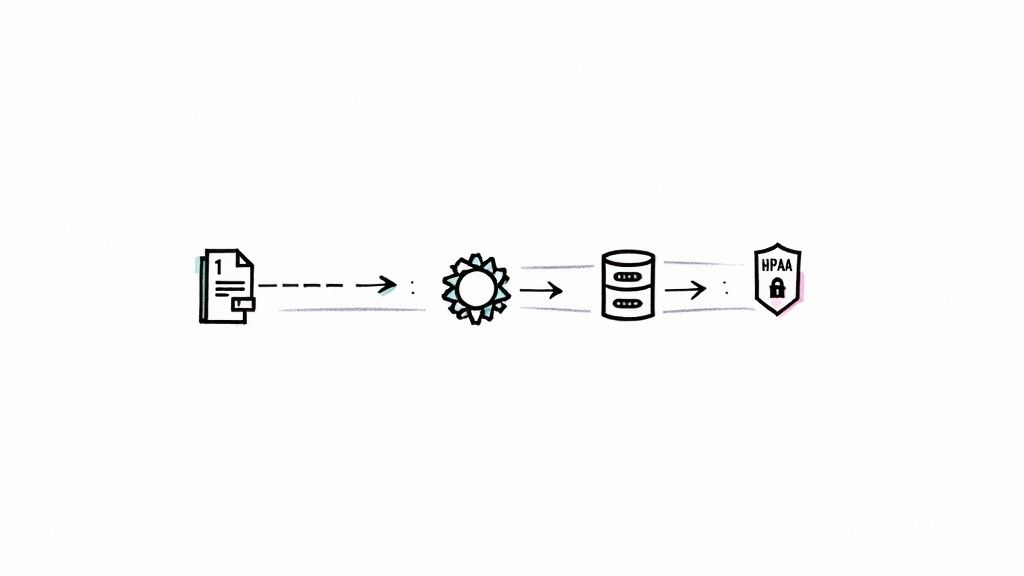

From the moment data is ingested and anonymized to how it's stored and accessed, the entire AI model lifecycle has to be built with security and privacy at its core. It’s non-negotiable. This is the bedrock of trust that drives clinical adoption and is a crucial part of any serious custom healthcare software development effort.

Building a Secure and Compliant Data Pipeline

Think of your data pipeline as the circulatory system for your AI initiative. Its primary job is to protect sensitive information at every single stage. That process starts with ironclad access controls, making sure only authorized personnel can ever see or handle patient data.

A truly compliant pipeline needs several key components:

Secure Ingestion: Data must be encrypted from the moment it enters your system—both in transit and at rest. No exceptions.

Anonymization and De-identification: Before any data is fed into a training model, all personally identifiable information (PII), including what HIPAA calls protected health information (PHI), has to be stripped out or masked. This is fundamental to protecting patient privacy.

Role-Based Access Control (RBAC): You need to implement strict permissions that limit who sees what. A researcher training a model, for example, has no business seeing the same raw data as a physician actively treating a patient.

Auditable Logs: Keep detailed, unchangeable logs of every single data access and modification. This gives you a clear audit trail for compliance checks and helps immensely if you ever need to investigate a security incident.

These are the technical guardrails that help you safely navigate the complex web of regulations governing healthcare data.
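To give a feel for two of those guardrails, here is a minimal sketch of de-identification and a tamper-evident audit log using only Python's standard library. The field names in `DIRECT_IDENTIFIERS`, the `deidentify` helper, and the `AuditLog` class are all hypothetical, and dropping a handful of fields is not enough on its own to meet a formal de-identification standard. Treat this as a starting point for a conversation with your privacy and security teams.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Hypothetical field names; the real list should come from your privacy officer.
DIRECT_IDENTIFIERS = {"name", "address", "phone", "email", "ssn"}


def deidentify(record, secret_key):
    """Drop direct identifiers and replace the patient ID with a keyed hash."""
    clean = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    digest = hmac.new(secret_key, str(record["patient_id"]).encode(), hashlib.sha256)
    clean["patient_id"] = digest.hexdigest()[:16]
    return clean


class AuditLog:
    """Append-only log in which every entry stores the hash of the previous entry."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash everything except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, user, action, resource):
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "resource": resource,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)

    def verify(self):
        """Return False if any entry was edited or the chain was broken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


# Synthetic record for illustration only.
raw = {"patient_id": 1042, "name": "Jane Doe", "phone": "555-0100", "age": 67, "hba1c": 7.9}
clean = deidentify(raw, secret_key=b"replace-with-a-managed-secret")

log = AuditLog()
log.append("researcher_17", "read", f"patient/{clean['patient_id']}")
print(clean)
print("log intact:", log.verify())
```

The keyed hash lets you link records for the same patient without storing the original ID, and the hash chain means a quietly edited log entry will fail `verify()`.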

Navigating the Regulatory Maze from HIPAA to the AI Act

Compliance in healthcare is anything but static. While HIPAA (Health Insurance Portability and Accountability Act) has been the gold standard in the U.S. for years, new regulations are cropping up worldwide to address the unique challenges AI presents. For example, getting documented patient authorization wherever the rules require it (many uses beyond routine treatment, payment, and operations fall into this bucket) is critical, which is why tools like a HIPAA Privacy Authorization Form Template are so important.

The EU's AI Act is a perfect example, setting a new global benchmark for AI, especially in high-risk fields like medicine. Your governance framework can't be set in stone; it has to be agile enough to adapt to these evolving legal demands.

Building for compliance isn't a one-and-done task. It's a continuous cycle of monitoring, auditing, and adapting. Your governance strategy has to be as dynamic as the regulations themselves.

The global healthcare system is under enormous pressure. The World Health Organization is projecting a staggering shortfall of 10 million healthcare workers by 2030, a gap that AI is uniquely positioned to help close. In response, regulators like the FDA and the European Union are working quickly to create rules that ensure AI is deployed safely and ethically. This is happening right as over 40% of health systems are already seeing a tangible return on their AI investments.

This intense regulatory focus highlights why a proactive compliance strategy is so critical. Instead of seeing these rules as roadblocks, think of them as a blueprint for building AI solutions that people can trust. Getting this right from the beginning is far more effective—and cheaper—than trying to bolt on compliance after the fact.

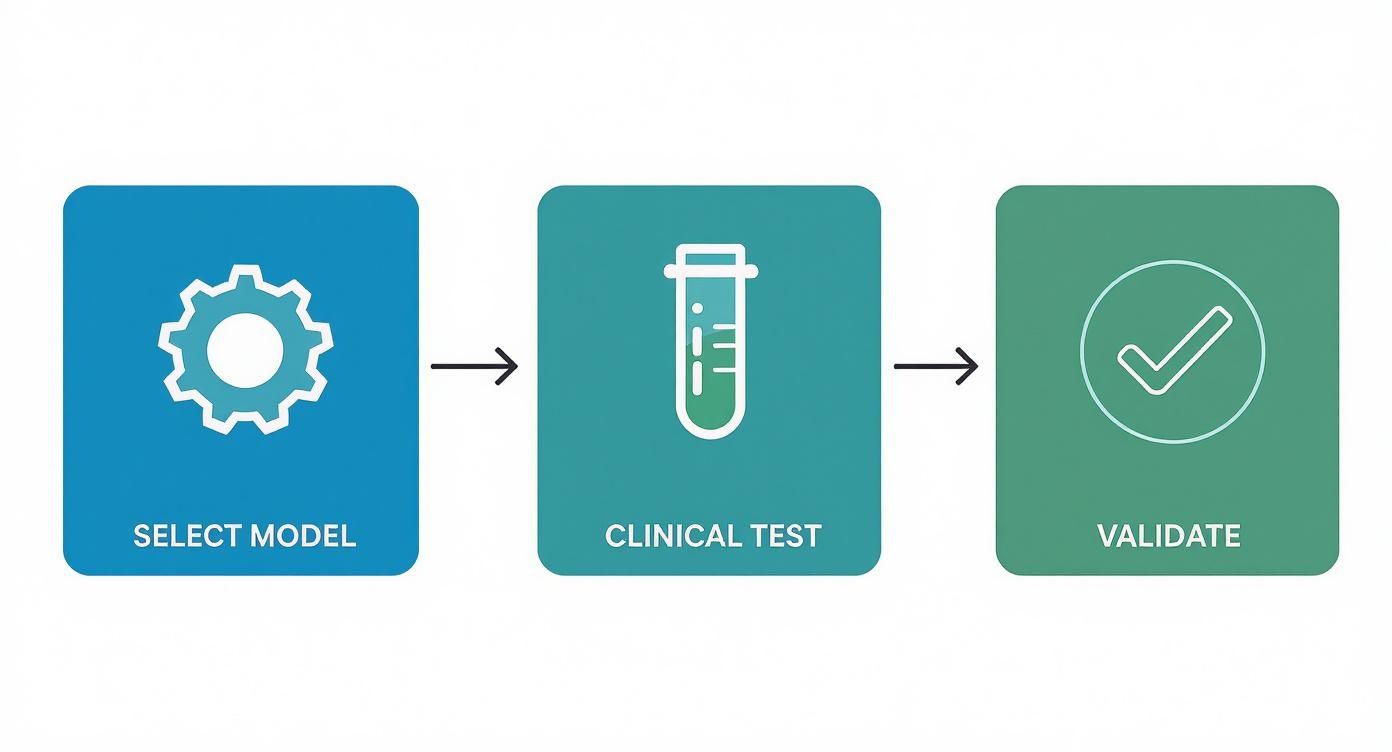

Designing and Validating Clinically Relevant AI Models

With a solid strategy and your data governance sorted, it's time to roll up our sleeves and get into the core technical work. This is where the rubber meets the road—where we move from theory to the messy reality of clinical practice. The focus sharpens to selecting, building, and, most importantly, rigorously validating AI models that can withstand the unique pressures of healthcare.

Let's be clear: the goal isn't just a model with high technical accuracy. We’re building tools that clinicians must be able to trust, day in and day out, to make better decisions for their patients. It’s a delicate balancing act between raw algorithmic performance and real-world clinical value.

This entire process is a central part of how we approach building AI products. We've seen firsthand that without this balance, even the most brilliant algorithm is destined to fail in a clinical setting.

Choosing the Right Model for the Job

Your first big technical decision is picking the right kind of model. The AI landscape is huge, and there's no silver bullet. The choice almost always boils down to two things: the kind of data you have and the problem you're trying to solve.

Traditional Machine Learning: Don't overlook the classics. Models like logistic regression or random forests are often the best choice for structured data problems. Think predicting patient no-shows from scheduling data or flagging high-risk individuals from their electronic health records (EHR). Their biggest advantage? They're far easier to interpret, which is a massive win in any clinical environment.

Deep Learning: When you’re dealing with unstructured data—like medical images, pathology slides, or messy clinical notes—you need more firepower. This is where deep learning models like Convolutional Neural Networks (CNNs) and Transformers shine. They can spot incredibly complex patterns that older methods would miss entirely.

For more advanced models, especially those used for clinical decision support, it's also worth getting familiar with techniques like Retrieval-Augmented Generation (RAG). This approach helps ground an AI’s outputs in verified, up-to-date knowledge bases, which is absolutely critical for ensuring the information is trustworthy.
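If RAG is new to you, the core idea fits in a few lines: retrieve the most relevant vetted passages, then ask the model to answer only from them. The sketch below is a toy. Word overlap stands in for real vector search, the `KNOWLEDGE_BASE` snippets are placeholders I wrote for illustration (not clinical guidance), and the step that would actually call a language model is left out.

```python
import re

# Placeholder snippets standing in for a vetted, versioned knowledge base.
KNOWLEDGE_BASE = [
    {"id": "kb-001", "text": "Metformin is a common first-line therapy for type 2 diabetes."},
    {"id": "kb-002", "text": "Annual retinal screening is recommended for patients with diabetes."},
    {"id": "kb-003", "text": "Hand hygiene reduces hospital-acquired infections."},
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, k=2):
    """Rank passages by word overlap with the question (a toy stand-in for vector search)."""
    q_tokens = tokenize(question)
    scored = [(len(q_tokens & tokenize(doc["text"])), doc) for doc in KNOWLEDGE_BASE]
    scored = [(score, doc) for score, doc in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_prompt(question):
    """Assemble a prompt that restricts the model to the retrieved sources."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in retrieve(question))
    return (
        "Answer using only the sources below and cite their IDs. "
        "If the sources do not cover the question, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


question = "How often should patients with diabetes get retinal screening?"
prompt = build_prompt(question)
print(prompt)
```

Because every answer has to cite a source ID, a clinician can trace a recommendation back to the passage it came from, which is the whole point of grounding.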

Moving Beyond Accuracy in Model Validation

In healthcare, "accuracy" alone is a dangerously misleading metric. I've seen models boast 99% accuracy for detecting a rare disease, which sounds incredible on paper. But when you dig in, you find it achieved that score by simply predicting "no disease" for every single patient. That's not just useless; it's actively harmful.

We have to look deeper, using metrics that actually reflect clinical reality.

A few you absolutely must track:

Sensitivity (Recall): How good is the model at finding the people who actually have the condition? For any kind of screening tool, high sensitivity is non-negotiable. You can't afford to miss cases.

Specificity: How good is the model at correctly identifying people who don't have the condition? High specificity is key to minimizing false alarms, which cause needless anxiety for patients and trigger expensive, unnecessary follow-up tests.

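Precision (Positive Predictive Value): When the model says a patient is positive, what’s the probability that it's right? This tells you how much you can trust a positive flag. Because precision drops as a condition gets rarer, always read it alongside the prevalence in your population.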
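The 99% accuracy trap is easy to reproduce. The sketch below uses a synthetic cohort of 1,000 patients with 1% prevalence and a "model" that always predicts no disease. The function names are my own, and the data is made up purely to show how the metrics behave.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (1 = disease)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def safe_div(numerator, denominator):
    """Return NaN instead of raising when a metric is undefined."""
    return numerator / denominator if denominator else float("nan")


def clinical_metrics(y_true, y_pred):
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    return {
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "sensitivity": safe_div(tp, tp + fn),   # share of sick patients found
        "specificity": safe_div(tn, tn + fp),   # share of healthy patients cleared
        "precision": safe_div(tp, tp + fp),     # share of positive flags that are right
    }


# Synthetic cohort: 10 patients with the disease, 990 without.
y_true = [1] * 10 + [0] * 990
always_negative = [0] * 1000

metrics = clinical_metrics(y_true, always_negative)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Accuracy comes out at 0.99 while sensitivity is 0.00: the model misses every single sick patient. Precision is undefined (NaN) because the model never raises a positive flag at all.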

The ultimate test of any model isn't its performance in a sterile lab environment. It's whether it genuinely improves clinical decision-making at the point of care. If a clinician doesn't trust it, the algorithm has failed, period.

To help you navigate this, here’s a quick breakdown of common validation methods and where they fit best.

Comparing AI Model Validation Techniques in Healthcare

This table provides an overview of different validation methods and their suitability for various healthcare AI applications to ensure model robustness and clinical relevance.

| Validation Method | Description | Best For | Pros | Cons |
|---|---|---|---|---|
| Cross-Validation | Splitting data into 'k' subsets; training on k-1 and testing on the remaining one, repeated k times. | General model performance assessment on a fixed dataset. | Robust estimate of performance; helps detect overfitting. | Computationally expensive; assumes samples are independent, so split by patient to avoid leakage. |
| External Validation | Testing a model on a completely new dataset from a different source (e.g., another hospital). | Assessing generalizability and real-world performance. | Gold standard for proving a model works beyond its training data. | Difficult to obtain external data; data drift can be an issue. |
| Prospective Study | Deploying the model in a live clinical setting and evaluating its performance on new, incoming patients. | Confirming clinical utility and workflow integration before full rollout. | Measures true real-world impact and user adoption. | Expensive, time-consuming, and requires ethical approvals. |
| Adversarial Testing | Intentionally feeding the model noisy or manipulated data to test its stability and failure points. | Safety-critical applications where robustness is paramount (e.g., diagnostic imaging). | Uncovers hidden vulnerabilities and edge cases. | Can be complex to design effective adversarial attacks. |
| Fairness & Bias Audits | Analyzing model performance across different demographic subgroups (e.g., age, race, gender). | All patient-facing models to ensure equitable outcomes. | Identifies and helps mitigate harmful biases. | Requires comprehensive demographic data, which may not be available. |

Each of these methods tells you something different about your model. A truly robust validation plan will likely use a combination of them to build a complete picture of the model's strengths and weaknesses.
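As one example, a basic fairness audit can start with nothing more than sensitivity broken out by subgroup. The sketch below is a minimal version under stated assumptions: the record layout (`label`, `prediction`, `age_band`), the 0.10 gap threshold, and the data are all hypothetical.

```python
from collections import defaultdict


def sensitivity_by_group(records, group_key):
    """Return sensitivity per subgroup; groups with no positive cases map to None."""
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    groups = set()
    for r in records:
        groups.add(r[group_key])
        if r["label"] == 1:
            if r["prediction"] == 1:
                true_pos[r[group_key]] += 1
            else:
                false_neg[r[group_key]] += 1
    result = {}
    for g in groups:
        positives = true_pos[g] + false_neg[g]
        result[g] = true_pos[g] / positives if positives else None
    return result


def flag_gaps(per_group, max_gap=0.10):
    """Flag groups whose sensitivity trails the best group by more than max_gap."""
    known = {g: s for g, s in per_group.items() if s is not None}
    if not known:
        return []
    best = max(known.values())
    return sorted(g for g, s in known.items() if best - s > max_gap)


# Synthetic data: the model catches 9 of 10 cases in one group, 6 of 10 in the other.
records = (
    [{"age_band": "18-64", "label": 1, "prediction": 1}] * 9
    + [{"age_band": "18-64", "label": 1, "prediction": 0}] * 1
    + [{"age_band": "65+", "label": 1, "prediction": 1}] * 6
    + [{"age_band": "65+", "label": 1, "prediction": 0}] * 4
)

per_group = sensitivity_by_group(records, "age_band")
flagged = flag_gaps(per_group)
print(per_group)
print("needs review:", flagged)
```

Here the "65+" group is flagged because its sensitivity (0.6) trails the other group (0.9) by more than the threshold. A real audit would repeat this for specificity and precision and account for small subgroup sizes.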

The Critical Role of Explainability and Clinical Trials

For a clinician to ever trust an AI recommendation, they have to understand why the model is making it. This is where Explainable AI (XAI) becomes essential. XAI techniques crack open the "black box" to reveal the evidence behind the conclusion—whether it’s highlighting specific pixels in an MRI or certain phrases in a doctor's note. This transparency is the bedrock of trust.

Ultimately, nothing beats a well-designed clinical study. This means putting the model to the test in a real-world setting, often head-to-head against the current standard of care. These studies are what separate a cool tech demo from a validated medical device, proving not just accuracy but tangible, clinical utility.

Managing this entire lifecycle—from initial validation to ongoing monitoring—is a massive undertaking. It's why we built our own platform, VeriFAI, specifically to help teams navigate these complexities. This kind of methodical, rigorous validation is what it takes to turn a promising algorithm into a reliable tool that doctors can depend on to improve patient care.

Implementing MLOps for Scalable and Reliable Deployment

Getting a promising AI model out of the lab and into a live clinical setting is a massive jump. What works perfectly in a controlled research environment often breaks when faced with the messy, unpredictable reality of patient care. This is precisely where MLOps (Machine Learning Operations) becomes your most critical discipline.

Think of MLOps as the operational backbone that turns a static algorithm into a dynamic, trustworthy system built for the long haul. It's the collection of practices that automates and standardizes the entire machine learning lifecycle—from wrangling data and training models to deployment and, most importantly, ongoing monitoring. In a regulated field like healthcare, this systematic approach isn't just a good idea; it's a necessity.

Core Components of a Healthcare MLOps Pipeline

A well-designed MLOps pipeline for healthcare is about much more than just pushing code. Its real job is to enforce safety, reliability, and compliance at every single step. It creates a repeatable, auditable process that can handle growing data volumes and user demand, whether your infrastructure is on-premise or in the cloud.

Here's what that looks like in practice:

Automated Data Validation: Before new data even gets near your model, the pipeline should automatically check it for quality issues, formatting errors, and statistical drift.

Reproducible Model Training: The entire training process needs to be scripted. This means you can retrain your model on new data with the push of a button and get consistent, predictable results every time.

Model Versioning and Registry: Treat your models like software. Every version should be tracked, logged, and stored in a central registry, making it easy to see what changed and roll back to a previous version if something goes wrong.

Staged Deployment: Never flip a switch and release a new model to everyone at once. Use careful strategies like canary releases or A/B testing to roll out updates to a small group of users first, watch for issues, and then expand.
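Two of these ideas, versioning and staged deployment, can be sketched together in a few dozen lines. This is an in-memory toy under my own assumptions: `ModelRegistry` and `route` are hypothetical names, the metadata values are made up, and a production setup would rely on a persistent registry and your serving platform's traffic controls.

```python
import hashlib


class ModelRegistry:
    """In-memory stand-in for a model registry with promote and rollback."""

    def __init__(self):
        self.versions = {}   # version string -> metadata
        self.live = None     # version serving most traffic
        self.canary = None   # candidate version under evaluation
        self.history = []    # previously live versions, newest last

    def register(self, version, metadata):
        if version in self.versions:
            raise ValueError(f"version {version} is already registered")
        self.versions[version] = metadata

    def promote(self, version):
        if version not in self.versions:
            raise KeyError(version)
        if self.live is not None:
            self.history.append(self.live)
        self.live = version
        if self.canary == version:
            self.canary = None

    def rollback(self):
        if not self.history:
            raise RuntimeError("no earlier version to roll back to")
        self.live = self.history.pop()


def route(registry, user_id, canary_percent=5):
    """Send a stable slice of users to the canary so each user always sees one version."""
    if registry.canary is None:
        return registry.live
    bucket = int(hashlib.sha256(user_id.encode()).hexdigest(), 16) % 100
    return registry.canary if bucket < canary_percent else registry.live


registry = ModelRegistry()
# Metadata values are illustrative only.
registry.register("1.0.0", {"training_data": "snapshot-A"})
registry.register("1.1.0", {"training_data": "snapshot-B"})
registry.promote("1.0.0")
registry.canary = "1.1.0"

served = [route(registry, f"clinician-{i}") for i in range(1000)]
print(served.count("1.1.0"), "of 1000 requests went to the canary")

registry.promote("1.1.0")   # canary looked healthy, so make it live
registry.rollback()         # something went wrong, so return to the previous version
print("live version:", registry.live)
```

Hashing the user ID (rather than picking randomly per request) keeps each clinician on a single model version during the rollout, which makes any complaints much easier to trace.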

The workflow for getting a model from the drawing board to a validated state is a core part of this MLOps cycle.

This process highlights a crucial point: building a model isn't a one-and-done task. It’s a continuous loop of selection, testing, and validation that demands systematic management.

Continuous Monitoring for Long-Term Safety and Efficacy

Once your model is live, the real work begins. The world changes, and so does the data your model sees in production. This slow, often invisible change is called data drift, and it can quietly degrade your model's performance over time—a risk you simply can't afford in a clinical setting.

The most dangerous model is the one you assume is still working perfectly. Without continuous monitoring, you're flying blind, and patient safety is at risk.

This is why continuous monitoring is a non-negotiable part of MLOps. Your systems need to be on constant alert, watching for red flags like:

Performance Degradation: Are key metrics like accuracy, sensitivity, or specificity dipping below the established safety thresholds?

Data Drift: Does incoming patient data look statistically different from the data the model was originally trained on?

Concept Drift: Have the fundamental relationships in the data changed? For instance, a new clinical guideline or treatment protocol could change patient outcomes in a way the model doesn't understand.
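For data drift on a single numeric feature, one widely used check is the Population Stability Index (PSI), which compares the binned distribution of a feature in training against what production is seeing. The sketch below is a bare-bones version on synthetic ages. The bin count, the small floor for empty bins, and the 0.25 alert threshold (a commonly cited rule of thumb, not a clinical standard) are assumptions you should tune.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a training sample and a production sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0   # fall back to 1.0 if all training values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)   # clamp values outside the training range
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic patient ages: training covers 30-79, production has shifted 15 years older.
training_ages = [i % 50 + 30 for i in range(1000)]
production_ages = [age + 15 for age in training_ages]

ALERT_THRESHOLD = 0.25  # rule-of-thumb value; treat as a starting point

same = psi(training_ages, training_ages)
shifted = psi(training_ages, production_ages)
print(f"PSI, identical data: {same:.3f}")
print(f"PSI, older population: {shifted:.3f}")
print("raise drift alert:", shifted > ALERT_THRESHOLD)
```

A check like this only covers data drift. Concept drift usually needs outcome labels, so it tends to show up through the performance metrics rather than through input distributions.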

When an alert is triggered, your MLOps pipeline should make it simple to diagnose the issue, retrain the model with fresh data, and safely deploy the updated version. This end-to-end oversight is what a mature AI Product Development Workflow delivers. By building a strong MLOps framework from the start, you ensure your AI solutions remain effective, safe, and compliant for their entire lifecycle.

Driving Clinical Adoption and Measuring Real-World ROI

You can build the most accurate, sophisticated AI model in the world, but if clinicians don’t trust it and won’t use it, it’s worthless. After all the hard work of nailing down the strategy, data governance, and MLOps, you’ve reached what is often the most human—and most difficult—hurdle: adoption.

The reality is that building AI for healthcare is as much about change management as it is about machine learning. The goal isn’t just to deploy a new tool; it's to seamlessly weave it into existing clinical workflows without adding another layer of complexity or contributing to the already high levels of burnout.

This comes down to a delicate balance of intuitive design, practical training, and a rock-solid feedback loop that makes your end-users feel heard. When clinicians see how a tool genuinely helps them and feel their input matters, they transform from skeptics into your biggest advocates.

From Resistance to Reliance: Strategies for Clinical Buy-In

Getting clinicians on board doesn’t start on launch day. It starts way back in the development process by bringing them into the fold early and often. When people have a hand in shaping the tools they’ll be using every day, they develop a sense of ownership that you simply can't force later on.

A few strategies have proven to make a huge difference in my experience:

Make It Part of the Workflow: The AI has to feel like a natural extension of the EHR or whatever system they’re already in. If it’s another login, another screen, another password to remember? Forget it. The path of least resistance always wins in a busy hospital.

Keep the UI Clean and Simple: Clinicians are drowning in data. Your interface needs to be a lifeline, not another firehose. It should present insights clearly and make the next step obvious and actionable.

Train for the Role, Not the Tool: One-size-fits-all training is a waste of everyone’s time. A radiologist cares about different features than a nurse or a care manager. Create short, role-specific training sessions that focus on solving their specific pain points.

Create Obvious Feedback Channels: Make it incredibly easy for users to report a bug, ask a question, or suggest an improvement. More importantly, act on that feedback quickly. It’s the single best way to show you respect their expertise.

This human-centered approach is central to our AI strategy consulting because we’ve learned firsthand that technology alone is never the complete answer.

Measuring What Matters: A Holistic ROI Framework

To keep your project funded and get buy-in for the next one, you have to prove its value. But in healthcare, ROI is about so much more than just dollars and cents. A truly comprehensive framework measures the total impact—across clinical outcomes, operational efficiency, and even user satisfaction.

The best way to build momentum is by showcasing these benefits with hard data from real-world use cases. Your measurement plan should track a mix of metrics.

| Metric Category | Example Metrics to Track |
|---|---|
| Clinical Outcomes | Reduced diagnostic error rates; improved patient risk stratification accuracy; decreased rates of hospital-acquired infections |
| Operational Efficiency | Time saved per patient encounter; optimized bed turnover rates; reduced administrative workload |
| Financial Impact | Lower 30-day readmission penalties; reduced length of hospital stay; cost savings from automated tasks |
| User Satisfaction | Clinician satisfaction scores (e.g., Net Promoter Score); System Usability Scale (SUS) ratings; qualitative feedback from user interviews |
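The two user satisfaction scores in the table have simple, standard formulas, so they are easy to compute from raw survey answers. Here is a minimal sketch. The function names and the sample responses are made up for illustration.

```python
def sus_score(responses):
    """Score one 10-item SUS questionnaire (each answer 1-5) on the 0-100 scale."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs ten answers, each from 1 to 5")
    total = 0
    for item_number, answer in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (answer - 1) if item_number % 2 == 1 else (5 - answer)
    return total * 2.5


def net_promoter_score(ratings):
    """NPS: percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


# Made-up survey answers from a pilot group of clinicians.
one_clinician_sus = [4, 2, 5, 1, 4, 2, 4, 2, 5, 1]
pilot_nps_ratings = [10, 9, 8, 7, 6, 3, 10, 9, 9, 5]

print("SUS:", sus_score(one_clinician_sus))
print("NPS:", net_promoter_score(pilot_nps_ratings))
```

Tracking these per role (radiologists, nurses, care managers) rather than as one blended number tends to be more useful, since each group experiences the tool differently.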

A successful AI solution doesn't just make the hospital's balance sheet look better; it makes a clinician's day less stressful and a patient's outcome more certain. That’s the true measure of ROI.

The healthcare sector, once a digital laggard, is catching up fast. Providers, especially large health systems, are funding roughly $1 billion of the total $1.4 billion spent on healthcare AI. Yet despite this investment, adoption still hovers around 35%, and some projections see it leveling off near 40%.

What does this tell us? It means the early adopters are all in, but winning over the majority requires showing undeniable, multifaceted value. This framework gives you the evidence to make that case. By tying your AI initiatives to tangible improvements, you build a powerful story that justifies the investment and clears the path for scaling your AI solutions across the entire organization. These are the very insights our expert team uses to refine and push healthcare AI projects forward.

Let's Get Building

Bringing a healthcare AI solution from a brilliant idea to a real-world, scalable tool is a serious undertaking. It’s definitely more of a marathon than a sprint. What I’ve seen work time and again is a careful blend of a clear strategic vision, solid technical execution, and—most importantly—a genuine understanding of what clinicians actually need. The path is complex, no doubt about it, but the payoff in creating a smarter, more equitable healthcare system is immense.

Following a clear playbook is key. You have to nail everything from the initial strategy and data governance to building a reliable MLOps pipeline and ensuring the final product actually fits into a doctor's or nurse's workflow. As we explored in our AI adoption guide, the human side of this is just as critical as the code.

It's a lot of work, but the impact is absolutely worth it.

If you're ready to get started, you might find a Custom AI Strategy report helpful to map out your first steps. Or, if you need to move faster, options like AI Automation as a Service can accelerate your progress. Of course, if you'd rather have a direct line for guidance, our expert team is always here to help you cut through the complexity and hit your goals.

FAQs: Your Questions on Building Scalable AI in Healthcare

1. What is the single biggest hurdle when building AI solutions for healthcare?

The biggest challenge is data. Securing high-quality, clean, accurately labeled datasets that comply with privacy regulations like HIPAA is a significant initial hurdle. Beyond data, ensuring the AI tool seamlessly integrates into existing clinical workflows without causing disruption is critical for adoption.

2. How can we ensure an AI model is fair and unbiased?

Ensuring fairness is an ongoing process. It begins with using diverse and representative training data to avoid perpetuating societal biases. During development, it involves using algorithmic fairness tools and regular audits to check for performance discrepancies across demographic groups. Post-deployment, continuous monitoring and Explainable AI (XAI) techniques are essential to maintain fairness and allow clinicians to question biased outputs.

3. Why is MLOps so important for healthcare AI?

MLOps (Machine Learning Operations) is critical because it provides the operational backbone for deploying, monitoring, and maintaining AI models safely and at scale. In a high-stakes environment like healthcare, MLOps automates updates, monitors for performance degradation (model drift), and ensures that every process is auditable and reproducible, which is essential for patient safety and regulatory compliance.

4. How do you measure the ROI of a clinical AI tool?

Measuring ROI in healthcare goes beyond financial returns. A holistic approach includes tracking:

Clinical Outcomes: Improvements in diagnostic accuracy, reduced complication rates, and better patient outcomes.

Operational Efficiency: Time saved by clinicians, optimized resource utilization, and reduced administrative burdens.

User Satisfaction: High adoption rates and positive feedback from clinical staff.

Financial Impact: Cost savings from automation, reduced readmission penalties, and shorter hospital stays.

5. What are the key steps to driving clinical adoption of AI tools?

Driving adoption starts with involving clinicians early in the development process. Key strategies include integrating the AI tool seamlessly into existing workflows (like the EHR), designing a clean and intuitive user interface, providing role-specific training, and establishing clear channels for user feedback that is acted upon promptly.

Ready to build a robust, scalable AI solution for your healthcare organization? Ekipa AI delivers tailored AI strategies and end-to-end execution to turn your vision into reality. Explore our AI Strategy consulting tool or connect with our expert team to get started.