Clinician AI Adoption Challenges: Overcome Barriers and Succeed

Confront clinician AI adoption challenges with practical steps to streamline workflows, build trust, and improve outcomes.

The real challenge with getting clinicians to adopt AI isn't a lack of interest—it's the massive gap between their enthusiasm for its potential and the reality of using it day-to-day. The biggest hurdles are tools that disrupt workflows, a fundamental lack of trust in "black box" algorithms, and ongoing worries about data security. To get past these, the focus has to shift to the clinician's actual experience.

The AI Paradox in Modern Healthcare

There's a strange disconnect happening in medicine right now. We hear endlessly about how AI will revolutionize patient care, but if you walk into an actual exam room, its presence is minimal. This is the exact problem so many healthcare organizations are facing: everyone is talking about AI, but very few are actually using it where it counts—with patients. This isn't just a technology problem; it’s a human problem.

This gap isn’t just a feeling; the numbers back it up. A recent survey from HLTH and Elsevier Health paints a clear picture of the disconnect between general interest and true clinical integration.

Clinician AI Adoption: The Gap Between Interest and Practice

The table below shows that while many clinicians have dabbled in AI, very few are trusting it for the critical work of patient care.

| Metric | Percentage of Clinicians |
|---|---|
| Have used some form of AI for work | 48% |
| Currently use AI for clinical decision-making | 16% |

These figures tell a crucial story: simply using a tool for administrative tasks doesn't build the confidence needed for high-stakes medical decisions. It points directly to deep-seated institutional barriers and a major need for better training. You can explore the full survey findings on the clinician AI adoption gap to see the data for yourself.
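To make the size of that gap concrete, here is a quick back-of-the-envelope calculation using only the two survey figures quoted above. It assumes that clinicians who use AI for clinical decision-making are a subset of those who have used AI for work, which the survey summary implies but does not state outright.

```python
# Survey figures quoted in the table above (percent of clinicians).
used_ai_for_work = 48
use_ai_for_clinical_decisions = 16

# Gap between general use and clinical use, in percentage points.
gap_points = used_ai_for_work - use_ai_for_clinical_decisions

# Assumption: clinical users are a subset of clinicians who have used AI at work.
share_of_users = use_ai_for_clinical_decisions / used_ai_for_work

print(f"Gap: {gap_points} percentage points")
print(f"Share of AI users applying it to clinical decisions: {share_of_users:.0%}")
```

Under that assumption, only about one in three clinicians who have tried AI at work rely on it for clinical decisions.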

Navigating the Adoption Disconnect

At its heart, the problem comes down to how these tools are dropped into an already overloaded clinical environment. Clinicians are drowning in administrative work and wrestling with clunky electronic health records (EHRs). If a new AI tool adds even one more click or disrupts a familiar routine, it will be met with resistance, no matter how powerful it claims to be.

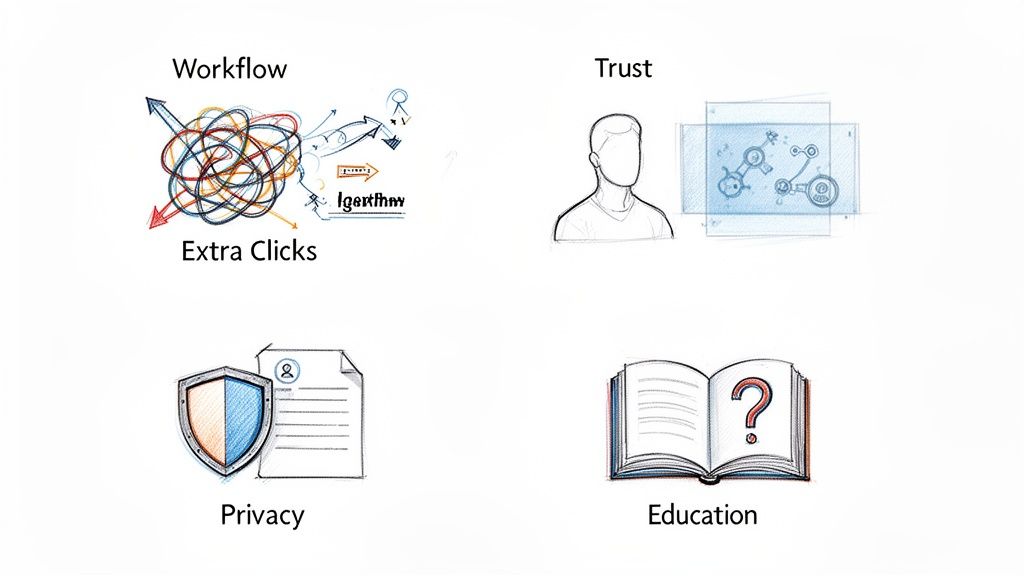

The fundamental roadblocks you’re likely hitting fall into a few key areas:

- Workflow Disruption: If an AI solution isn't seamlessly embedded into the existing workflow, it feels less like a helpful assistant and more like another box to check.

- Trust Deficits: When an AI gives a recommendation without a clear "why," it breeds skepticism. Clinicians simply won't bet a patient's health on a recommendation they can't understand.

- Data Security and Privacy: Real, valid concerns about patient confidentiality and HIPAA compliance can stop an AI project dead in its tracks before it even starts.

The first step to solving this is to stop seeing these as technical glitches and start treating them as strategic challenges. This means putting the clinician at the absolute center of the design and rollout process. This is precisely where expert guidance can make all the difference. At Ekipa AI, our Healthcare AI Services are built to bridge this exact gap, helping organizations turn interest into real-world clinical impact and ensuring technology finally starts working for clinicians, not against them.

Getting to the Heart of AI Adoption Barriers

For any new AI tool to have a fighting chance in a hospital or clinic, it has to answer one simple question from the doctor's point of view: "Does this make my life easier or harder?" All too often, technology built with the best intentions falls flat on this first, most basic test. The problem usually isn't the algorithm; it's the fundamental, human-side challenges that were overlooked.

Taking a hard look at these core clinician AI adoption challenges is the only way to build solutions that actually get used. These aren't just isolated tech glitches. They're interconnected roadblocks that demand a thoughtful, proactive game plan. If you miss even one, the whole project can grind to a halt.

The Friction of Workflow Disruption

Imagine asking a surgeon to use a new scalpel that forces them to log into a separate app, click through three screens, and then copy-paste the results back into the patient's chart. It wouldn't matter how sharp the blade is; they'd ditch it by the end of the day. This is exactly how many AI tools feel to clinicians right now.

When an AI solution doesn't plug seamlessly into the existing Electronic Health Record (EHR) and the way doctors already work, it just creates more friction. Every extra click, login, or pop-up window adds to the mental burden of a professional who is already stretched to their limit. This is a huge reason why many powerful AI tools for business simply don't translate to healthcare; the context is everything. The best solutions have to feel like a natural extension of what clinicians are already doing, not a frustrating detour.

The "Black Box" and the Trust Deficit

Trust is the bedrock of medicine. Clinicians are trained their entire careers to understand the "why" behind every decision, diagnosis, and treatment. So when an AI model spits out a recommendation without a clear, understandable reason, it creates a massive trust gap. This is the classic "black box" problem.

A revealing study by Medscape & HIMSS found that 72% of healthcare professionals point to data privacy as a major risk. This concern goes beyond just security—it gets to the heart of whether the AI's insights are reliable and transparent.

Doctors and nurses are right to be skeptical of algorithms they can't see inside. To feel confident acting on an AI's suggestion, they need to understand what data points and logic led to that conclusion. The only way to build trust is to design AI systems that are transparent and auditable, turning that black box into a glass box. Making this happen is a central part of any effective AI strategy consulting engagement.

Data Privacy and Security Anxieties

In healthcare, data isn't just information—it's someone's most personal story. The fear of data breaches, HIPAA violations, and the misuse of patient records is a huge barrier holding back AI adoption. Clinicians are the frontline guardians of this sensitive information, and they are understandably hesitant to embrace any new tech that might put it at risk.

These aren't paranoid fears; they're completely valid. Any AI tool that touches patient health information (PHI) must be built on a rock-solid foundation of security protocols and clear data governance. This is about more than just firewalls. It requires being upfront with clinicians about:

- Where data lives: Is it on a local server or in the cloud?

- How data is protected: What specific steps are taken to anonymize patient information?

- Who can see it: What are the access controls and audit trails?

Without clear, honest answers, anxiety will fill the void and adoption will stall. A proper AI requirements analysis is critical for ensuring these security measures are baked in from day one, not bolted on as an afterthought.
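For technical teams, the "who can see it" question often comes down to two mechanisms: role-based access checks and an audit trail that records every attempt. The sketch below is a minimal illustration, not a HIPAA-compliant implementation. The role names, permission labels, and the `request_access` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission map; a real system would load this from policy.
ROLE_PERMISSIONS = {
    "physician": {"read_phi", "write_note"},
    "nurse": {"read_phi"},
    "analyst": set(),  # assumed to work only with de-identified data
}


@dataclass
class AuditEvent:
    timestamp: str
    user: str
    role: str
    action: str
    record_id: str
    allowed: bool


@dataclass
class AuditTrail:
    events: list = field(default_factory=list)

    def record(self, user, role, action, record_id, allowed):
        """Append one entry for every access attempt, allowed or denied."""
        self.events.append(
            AuditEvent(
                timestamp=datetime.now(timezone.utc).isoformat(),
                user=user,
                role=role,
                action=action,
                record_id=record_id,
                allowed=allowed,
            )
        )


def request_access(trail, user, role, action, record_id):
    """Check the role's permissions and log the attempt either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    trail.record(user, role, action, record_id, allowed)
    return allowed


trail = AuditTrail()
print(request_access(trail, "dr_lee", "physician", "read_phi", "patient-001"))  # True
print(request_access(trail, "sam", "analyst", "read_phi", "patient-001"))       # False
print(len(trail.events))  # 2: denied attempts are logged too
```

Logging denied attempts alongside successful ones is a deliberate choice here: it lets a reviewer answer "who tried to see this record," not just "who saw it."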

The Critical Education and Training Gap

Finally, even the most intuitive, trustworthy, and secure AI tool will gather dust if clinicians aren't properly trained to use it. A one-off webinar and a PDF guide just won't cut it. Real training has to go beyond the "how-to" and get to the "why"—explaining the tool's limitations, its strengths, and the specific clinical moments where it adds the most value.

This education gap is one of the most underestimated clinician AI adoption challenges. Training needs to be an ongoing process, tailored to different roles, and laser-focused on building confidence. The goal isn't just to teach people to click buttons, but to empower them to use the tool and critically evaluate its outputs. This creates informed users who see AI as a reliable partner in delivering care, a mission that requires a true partnership between technology experts and clinical leaders. Tackling these hurdles is complex, but with the right strategic partner it's entirely achievable. That is why working with our expert team can make all the difference.

Building Trust and a Rock-Solid Governance Framework

In healthcare AI, the technology is only half the story. The real make-or-break moments happen on the human side of the equation. For a clinician, picking up a new AI tool isn’t like learning new software—it’s about trusting an algorithm with their professional judgment and, more importantly, their patients’ lives. This is why building genuine trust and creating a solid governance framework are the twin pillars of any successful adoption strategy.

Without them, even the most brilliant tech is dead on arrival. When clinicians voice concerns about data privacy or the clinical validity of an AI's output, they aren't just complaining. They're highlighting critical risks born from a deep-seated professional responsibility, and they need a structured, transparent answer from leadership.

Preventing "Shadow AI" with Clear Governance

When an organization doesn't provide clear guidelines and vetted tools, something dangerous starts to happen: "shadow AI." This is when clinicians, buried under administrative work and desperate for a lifeline, start using unapproved, consumer-grade AI tools to get the job done. This practice swings the door wide open for huge data security risks, potential HIPAA violations, and serious clinical errors.

The only way to stop this is with a strong governance framework. This isn't just an IT problem; it's a collaborative effort. Executives, IT security, and clinical teams must come together to co-design clear policies for how AI can and can't be used. This gets everyone ahead of the problem, ensuring that any AI tool used is secure, vetted, and actually fits into clinical best practices—preventing the chaos that comes from an ungoverned free-for-all.

Trust, reliability, and data privacy are the fundamental barriers holding back widespread AI adoption among clinicians. As noted earlier, a Medscape & HIMSS report found that 72% of healthcare professionals see data privacy as a significant risk. This trust gap remains a major hurdle even as investment in healthcare AI is expected to hit $1,033.27 billion (just over $1 trillion) by 2034. It’s a clear signal that money alone can't buy the confidence needed for true adoption.

From Skepticism to Confidence Through Transparency

The best way to dissolve skepticism is with radical transparency. Clinicians need to see how an AI tool reaches its conclusions, not just what it concludes. This means setting up clear validation protocols that pit AI models against real-world clinical scenarios long before they go live.

This process has to feel like a partnership, not a top-down directive. When you bring clinicians into the validation and oversight process, they stop being passive users and become active stakeholders in the technology's success. Building that lasting trust also means learning from what can go wrong, which involves thoroughly analyzing AI security failures. This commitment to open, honest evaluation demystifies the "black box" and builds real confidence.

For a closer look at navigating this complex process, our guide on implementation support can help manage these crucial steps effectively.

Formalizing Your Strategy for Responsible Adoption

Turning these ideas into action requires a formal, documented strategy. This is more than just a rulebook; it’s a shared blueprint for responsible innovation that everyone in the organization can get behind. A truly comprehensive strategy should nail down a few key areas:

- Data Handling Protocols: Crystal-clear rules defining how patient data is used, stored, and protected.

- Validation Standards: A step-by-step process for testing and signing off on any new clinical AI tool.

- Oversight Committees: A cross-functional group—with clinicians, ethicists, and IT—to review AI performance and its ethical implications.

- Clinician Feedback Loops: A structured way for users on the front lines to report issues, flag concerns, and suggest improvements.

By formalizing this process, you ensure that AI is brought into your organization in a way that’s not just effective but also ethical and safe for everyone involved.
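One lightweight way to keep those four areas from staying on paper is to encode them as a pre-pilot checklist. The sketch below is purely illustrative: the `GovernanceReview` class, its field names, and the example tool are hypothetical, and a real review would involve far more than four yes/no answers.

```python
from dataclasses import dataclass


@dataclass
class GovernanceReview:
    """Hypothetical pre-pilot checklist mirroring the four areas listed above."""
    tool_name: str
    data_handling_documented: bool
    validation_signed_off: bool
    oversight_committee_assigned: bool
    feedback_channel_open: bool

    def missing_items(self):
        """Return the names of any governance areas not yet satisfied."""
        checks = {
            "data handling protocols": self.data_handling_documented,
            "validation standards": self.validation_signed_off,
            "oversight committee": self.oversight_committee_assigned,
            "clinician feedback loop": self.feedback_channel_open,
        }
        return [name for name, done in checks.items() if not done]

    def ready_for_pilot(self):
        """A tool is ready only when no governance area is outstanding."""
        return not self.missing_items()


review = GovernanceReview(
    tool_name="example-scribe",
    data_handling_documented=True,
    validation_signed_off=True,
    oversight_committee_assigned=False,
    feedback_channel_open=True,
)
print(review.ready_for_pilot())  # False
print(review.missing_items())    # ['oversight committee']
```

Returning the list of missing items, rather than a bare pass/fail, gives the oversight group something specific to act on.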

Lessons From the AI Scribe Revolution

If you want a masterclass in how to get AI adoption right, look no further than the recent explosion of AI-powered ambient scribes. This isn't ancient history; it's a powerful, real-time case study showing what happens when technology directly attacks a massive, universal pain point for doctors: documentation burnout.

The story here teaches one fundamental lesson above all others: the strongest catalyst for adoption is a genuine 'pull' from clinicians themselves. Electronic Health Records (EHRs) were often a 'push'—a top-down mandate clinicians had to endure. Scribes, on the other hand, were welcomed with open arms because they offered immediate, tangible relief from a daily grind. This is precisely why a well-thought-out AI Product Development Workflow is non-negotiable; it has to start by obsessing over the user's biggest headaches.

The Speed of Solving a Real Problem

The adoption rate for AI scribes has been stunning, especially when you contrast it with the slow, agonizing rollout of EHR systems decades ago. It just goes to show that the typical clinician AI adoption challenges seem to melt away when a tool delivers huge value with almost no friction.

A report from Bessemer Venture Partners, the State of Health AI 2026, found that a staggering 92% of provider health systems were already deploying or piloting AI scribes by early 2025. Think about that. This technology reached a market penetration in just two or three years that took EHRs more than a decade to achieve. You can get more details in Bessemer Venture Partners' full report on Health AI.

This wasn't about flashy marketing or a long list of features. The value proposition was beautifully simple: give clinicians their time back so they can focus on patients, not keyboards.

The Hidden Dangers of Ungoverned Adoption

But this runaway success story has a flip side. The demand was so intense that many organizations started seeing "shadow AI" pop up all over the place. Individual doctors and entire departments began using whatever scribe tools they could find online, often without any formal vetting from IT or leadership. This ad-hoc approach opened up serious holes in data security and raised questions about clinical accuracy.

This forced organizations into a reactive, chaotic scramble to build governance models after the fact. The AI scribe phenomenon, then, is also a cautionary tale for healthcare executives.

While solving a real problem is the ultimate catalyst for adoption, it must be paired with a proactive and strategic governance framework. Speed without structure creates risk that can undermine long-term success and trust.

This kind of reactive fire-fighting highlights just how crucial it is to have a plan from the beginning. A formal strategy, often developed with the help of AI strategy consulting, ensures that security and compliance are baked into the process from day one, not bolted on during a crisis.

Applying the Lessons to Future Innovations

The scribe saga offers a clear blueprint for any new AI project you're considering. It proves that clinicians will enthusiastically embrace technology that genuinely makes their lives easier, fits neatly into their workflow, and solves a problem they actually care about. You can see this same pattern play out across many other real-world use cases where tech tackles a core bottleneck.

So, as you think about introducing new AI tools into your clinical environment, ask yourself these questions, inspired by the scribe revolution:

- What's our version of "documentation burnout"? Pinpoint the single biggest point of friction your AI tool is designed to eliminate.

- How do we create that strong "pull" from our clinicians? Get them involved early in the selection and design process. Make them feel like it's their solution.

- Is our governance framework ready for success? Make sure you have the security protocols and oversight committees in place before you even launch a pilot.

By learning from the very recent past, your organization can copy the successes of the AI scribe movement while sidestepping its mistakes. A strategic approach ensures your innovation is not just fast but also safe, sustainable, and truly beneficial for everyone involved. The right partner can help you build that foresight into your plan from the get-go.

Your Strategic Roadmap to Successful AI Adoption

Knowing the challenges of AI adoption is one thing; overcoming them requires a real plan. Moving from a good idea to a tool your clinicians actually use doesn't happen by accident. It's a journey, and we can break it down into four distinct phases that take you from initial discovery all the way to widespread, sustainable use.

Think of this less as a tech deployment and more as a change management initiative. Each step is designed to build on the last, earning the trust of your clinical teams and proving that the new tool delivers real, tangible value. Following a structured roadmap like this is how you turn a promising pilot into the new standard of care, as we explored in our AI adoption guide.

Phase 1: Discovery and Strategy

Every successful AI project starts with people, not algorithms. The first phase is all about getting on the ground and deeply understanding the clinical environment. You need to find the specific, nagging pain points that AI can realistically solve. This is how you find a solution that clinicians will be asking for, rather than one you have to push on them.

The first move is a thorough needs assessment and AI requirements analysis. This means sitting down with your physicians, nurses, and other frontline staff to pinpoint where the friction is. Is it the endless documentation? The uncertainty in diagnostics? The nightmare of patient scheduling?

Key actions in this phase include:

- Hosting clinician focus groups to hear firsthand about their workflow bottlenecks.

- Analyzing operational data to find the hard numbers that back up the anecdotal evidence.

- Defining clear success metrics that go beyond just ROI to include things clinicians care about, like satisfaction and time saved.

The goal here is to walk away with a handful of high-impact, well-defined use cases. This keeps you from trying to boil the ocean and focuses your energy where it will make the biggest difference, fast. For a more structured approach, a Custom AI Strategy report can help formalize this process and pinpoint those prime opportunities.

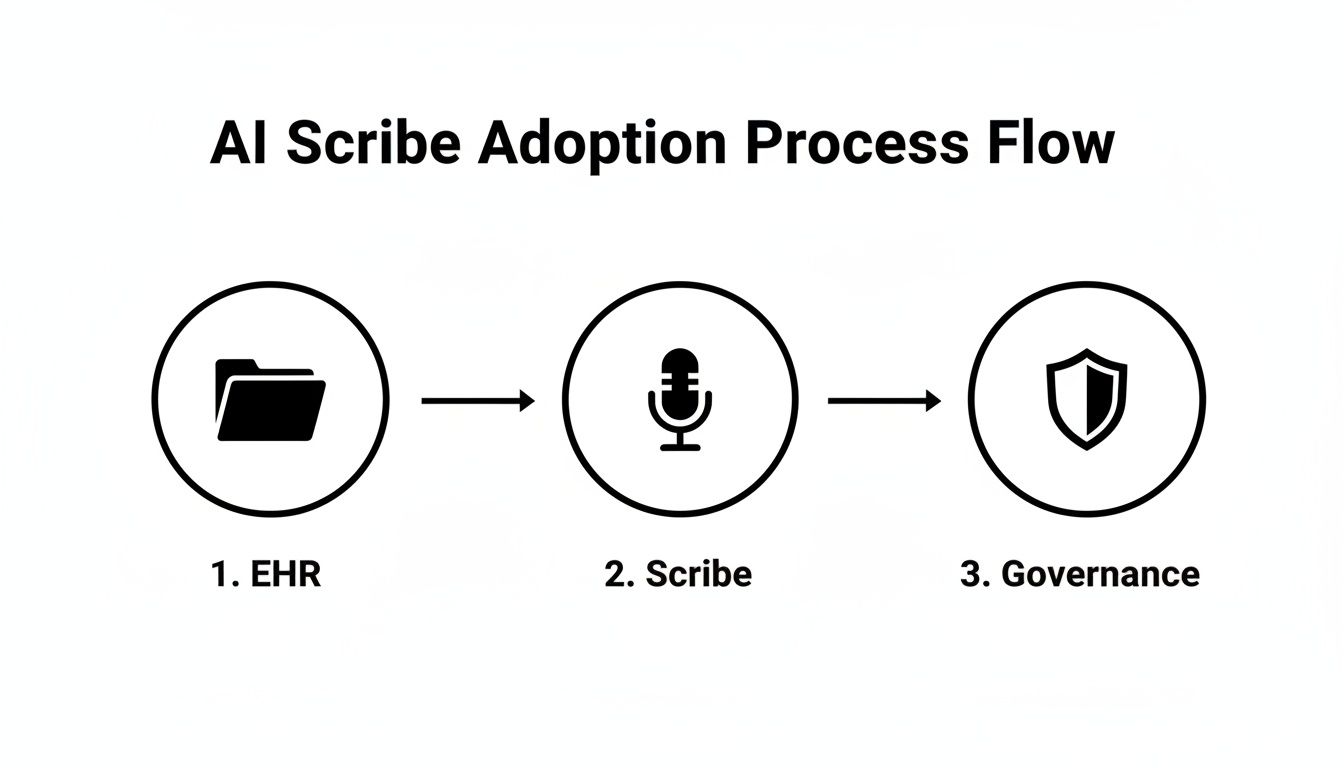

Phase 2: Governance and Planning

Once you know what you want to solve, the next step is building the framework to do it safely, ethically, and legally. This is where you establish the rules of the road. Without strong governance, you risk "shadow AI" popping up everywhere and creating institutional confusion. Good governance isn’t red tape; it’s the safety net that gives everyone the confidence to move forward.

The core of this phase is creating a cross-functional AI governance committee. This isn't just an IT project. The team needs clinical leaders, IT security experts, legal and compliance officers, and an executive sponsor. Their job is to create and enforce clear rules for data security, clinical validation, and ethical oversight.

This diagram shows a simplified flow for bringing in a new tool like an AI scribe. It makes it clear that governance isn't an afterthought—it's a core part of the process.

As you can see, a formal governance structure has to run parallel to the technical work to manage risk and ensure everything is buttoned up.

Phase 3: Piloting and Validation

This is where the rubber meets the road. The pilot phase is your chance to test the AI tool in a controlled, real-world setting. You have two main goals here: first, to validate that the technology actually works as promised, and second (and just as important), to get honest feedback from the clinicians who will use it every day. A successful pilot is your single most powerful tool for getting buy-in across the organization.

Choose a small, engaged group of clinicians to be your first users. These early adopters should be a representative cross-section of your broader user base and, crucially, be willing to tell you what they really think. During the pilot, you have to track both the numbers (like time spent on a task) and the qualitative feedback (through surveys and one-on-one chats). This is where you iron out the wrinkles, refine the workflow, and create your first group of internal champions who can vouch for the tool's value.
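Tracking "the numbers" during a pilot can start very simply. The sketch below compares average time on task before and during a pilot and summarizes survey scores. All of the data is made up for illustration, and the `summarize_pilot` helper and its output keys are hypothetical names, not part of any particular tool.

```python
from statistics import mean

# Hypothetical pilot data: minutes spent on documentation per visit.
baseline_minutes = [12.0, 15.0, 11.0, 14.0]
pilot_minutes = [8.0, 9.0, 7.0, 10.0]

# Hypothetical clinician survey responses on a 1-5 satisfaction scale.
satisfaction_scores = [4, 5, 3, 4]


def summarize_pilot(baseline, pilot, scores):
    """Compare time on task before and during the pilot, plus survey results."""
    baseline_avg = mean(baseline)
    pilot_avg = mean(pilot)
    return {
        "baseline_avg_minutes": baseline_avg,
        "pilot_avg_minutes": pilot_avg,
        "time_saved_pct": round(100 * (baseline_avg - pilot_avg) / baseline_avg, 1),
        "avg_satisfaction": mean(scores),
    }


summary = summarize_pilot(baseline_minutes, pilot_minutes, satisfaction_scores)
for metric, value in summary.items():
    print(f"{metric}: {value}")
```

With this sample data, average documentation time drops from 13.0 to 8.5 minutes, a saving of about 34.6%. A summary like this complements the qualitative feedback from surveys and one-on-one conversations rather than replacing it.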

Phase 4: Scaling and Sustaining

With a successful pilot under your belt, it's time to go big. This final phase is less about the technology and all about managing the change. The key is to use the momentum and the stories from your pilot to drive confident, widespread use across the entire organization. This means a serious investment in training and communication.

You'll need a comprehensive training program that doesn’t just show people how to click the buttons, but explains the why behind the tool. Share the success stories from the pilot. Use testimonials from your clinical champions to build credibility with their peers. Success here isn’t just about usage numbers; it’s about shifting the culture to a place where AI is seen as a trusted and essential partner in providing great patient care.

Here’s a summary of how these phases come together in a cohesive roadmap.

Four-Step AI Adoption Roadmap for Healthcare Leaders

This table outlines the strategic journey, breaking down the key actions and goals for each phase to ensure your AI implementation is a success with clinicians.

| Phase | Key Actions | Primary Goal |
|---|---|---|
| 1. Discovery & Strategy | Conduct needs assessments, host clinician focus groups, define success metrics. | Identify high-impact use cases that solve real clinical pain points. |
| 2. Governance & Planning | Form a cross-functional governance committee, establish data and ethical protocols. | Build a safe, compliant, and trusted framework for AI implementation. |
| 3. Piloting & Validation | Run a controlled pilot with early adopters, gather quantitative and qualitative feedback. | Validate the tool’s effectiveness and create internal champions. |
| 4. Scaling & Sustaining | Develop a comprehensive training program, communicate pilot successes, monitor adoption KPIs. | Drive organization-wide adoption and embed AI as a standard of care. |

By following this structured approach, healthcare leaders can navigate the complexities of AI adoption, transforming promising technology into a valuable clinical asset.

Need an Expert Partner to Guide Your Healthcare AI Strategy?

As we've seen, overcoming the hurdles to clinician AI adoption isn't something you should tackle alone. This is about more than just plugging in new software. It’s about a fundamental shift that puts the clinician’s real-world experience at the very center of your strategy.

Success comes from making AI a seamless part of the clinical workflow, building deep-seated trust in the technology, and having a clear, proactive governance plan. This is where having a dedicated partner can be the difference between a stalled project and a successful one.

Ekipa AI was founded to help healthcare organizations navigate this exact challenge. Our expertise in AI strategy consulting is all about closing the gap between what AI promises and how it actually performs day-to-day in a clinical setting. We don’t just point you to new tech; we help you create a culture that embraces it.

Turning Skeptics into Champions

We take a hands-on, collaborative approach from the very beginning. By working directly with your clinical teams, we help turn that initial resistance into genuine advocacy. How? By ensuring every AI tool you introduce solves a real-world headache rather than creating a new one.

We focus on securing quick, meaningful wins with high-value real-world use cases. These early successes build momentum and show everyone, from the front lines to the C-suite, the undeniable value of what you’re building.

Our process looks like this:

- We co-design solutions right alongside your clinicians, making sure the final product fits their workflow like a glove.

- We establish transparent validation and testing protocols so your team can see for themselves that the AI's insights are reliable.

- We help you develop a clear governance framework to stay ahead of risks and manage them effectively.

Our dedicated Healthcare AI Services are designed for the unique pressures and regulations of the medical world. We get it—in healthcare, technology has to support the provider-patient relationship, not get in the way of it.

Whether you need a fast-tracked Custom AI Strategy report to pinpoint your biggest opportunities or full support for custom healthcare software development, we have the strategic and technical know-how you need.

Let us help you build an AI strategy that genuinely improves care, empowers your clinicians, and delivers results that actually matter. Get in touch with our expert team today and take the first step toward making AI a sustainable success in your organization.

FAQs on Clinician AI Adoption Challenges

What is the biggest challenge in adopting AI in healthcare?

Two challenges share the top spot: workflow disruption and a lack of clinician trust. AI tools that aren't seamlessly integrated into existing EHRs create more work, leading to immediate rejection. At the same time, "black box" algorithms that don't provide clear explanations for their recommendations are met with deep skepticism by professionals trained to understand the "why" behind every decision.

How do you increase AI adoption among clinicians?

To increase adoption, focus on three core areas:

- Solve a Real Problem: Target AI solutions at major clinician pain points, like administrative burnout from documentation.

- Ensure Seamless Integration: The tool must fit naturally into existing clinical workflows without adding extra steps or friction.

- Build Trust Through Transparency: Involve clinicians in the validation process, provide clear training, and use AI models that are explainable and auditable.

What are the ethical challenges of AI in healthcare?

Key ethical challenges include ensuring patient data privacy and security (HIPAA compliance), mitigating algorithmic bias that could worsen health disparities, establishing clear accountability when an AI tool makes an error, and obtaining informed consent from patients for the use of AI in their care.

How can healthcare organizations prepare for AI integration?

Organizations can prepare by developing a comprehensive AI strategy that includes:

- Forming a Governance Committee: Create a cross-functional team of clinical, IT, and administrative leaders to oversee AI implementation.

- Starting with a Pilot: Identify a high-impact, low-risk use case to pilot the technology, prove its value, and build internal champions.

- Investing in Training: Develop an ongoing education program that goes beyond basic functions to build clinician confidence and understanding of the AI's strengths and limitations.

Getting past these hurdles isn’t about technology alone; it requires a strategic, people-focused vision. It's about finding a partner who can close the gap between AI's potential and its practical, day-to-day impact. Our specialized Healthcare AI Services are designed to guide you through this process. Connect with our expert team today and let's turn your vision into reality.