Ethical AI in Healthcare: Building Trust in Intelligent Systems

Ethical AI is essential for building trust in healthcare systems. Learn how organizations can ensure transparency, reduce bias, and create responsible AI solutions that support safe and reliable patient care.

Artificial intelligence is quietly becoming part of everyday care. Hospitals and care teams now use intelligent systems to help with diagnosis, automate tasks, and connect with patients. These tools bring speed and precision to complex work that used to rely on manual effort. Still, a question keeps coming up. Can people truly trust AI in healthcare?

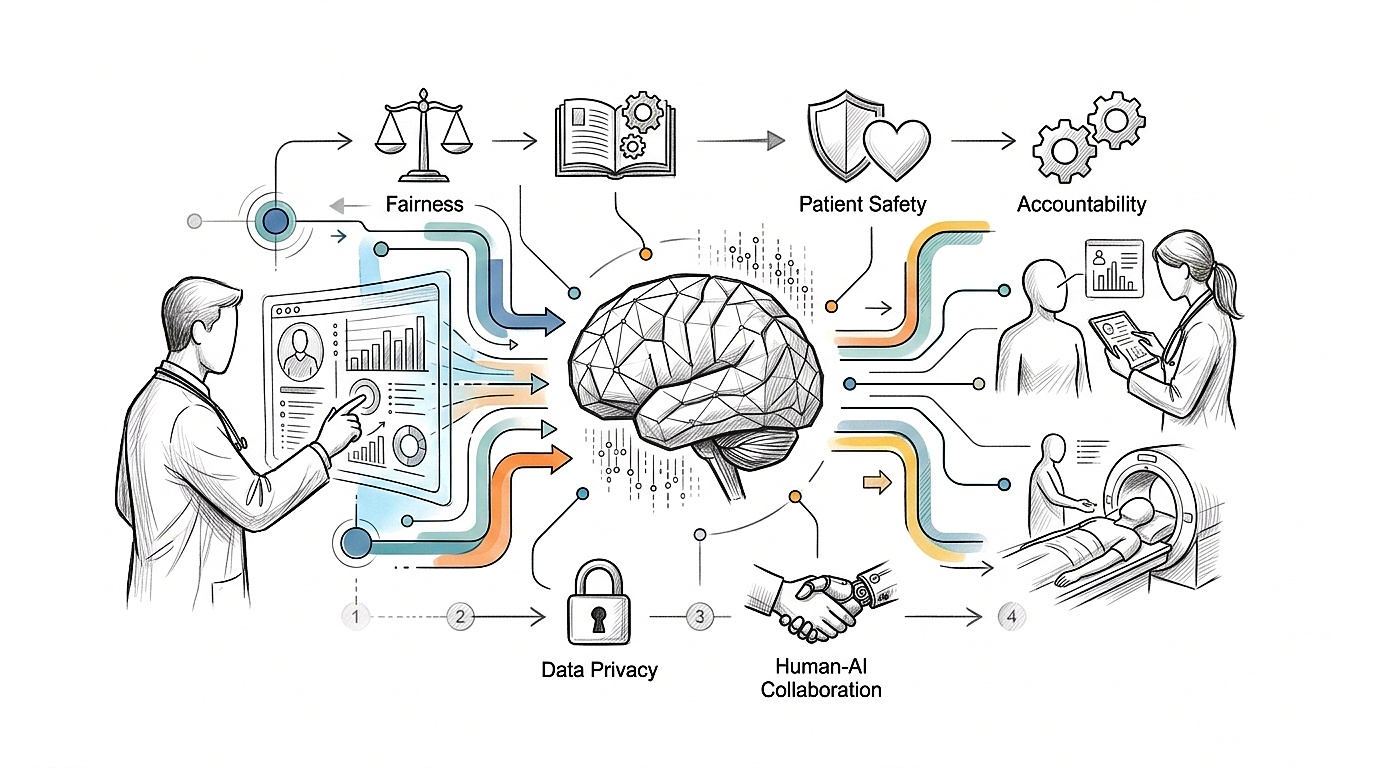

The answer depends on how carefully these systems are built and used. Ethical AI in healthcare centers on fairness, openness, and patient safety. It creates the trust needed between technology, doctors, and patients. This post explores how ethical practices guide AI in healthcare and why trust is so essential to making it work.

The Rise of AI in Healthcare

Artificial intelligence has become a regular part of how healthcare runs day to day. Doctors use AI-powered imaging tools to catch diseases earlier. Predictive systems look at patient data and offer insights that help shape treatment plans. Virtual assistants talk with patients, answer common questions, and keep communication flowing across the care journey. These advances can mean faster results and fewer delays in urgent moments. They can also cut down on mistakes and take some of the burden off clinical teams. When these tools work well, care becomes more efficient and patients can see better outcomes as a result.

AI adoption is growing quickly across hospitals, research centers, and digital health platforms. That growth shows how much value AI brings to care delivery. But with that growth comes responsibility. A skilled healthtech engineering partner helps build systems that meet medical standards and patient expectations. Their work shapes how responsibly AI enters clinical settings.

Why Ethics Matters in Healthcare AI

Healthcare deals with human lives, so every decision carries weight. Ethical AI in this space focuses on using technology safely, fairly, and responsibly. It shapes how systems handle sensitive data and how their decisions affect patients.

Patient safety is always top of mind. AI tools need to deliver consistent, reliable results. Any slip can have serious consequences. Fairness is another essential piece. AI should treat every patient equally, without bias around gender, race, or background.

Accountability also matters. When AI influences care, clinicians need to understand how the system reaches its output and who is responsible for the outcome. Organizations offering healthcare AI services must ground their solutions in ethical principles to earn trust and stay credible across the healthcare system.

Key Ethical Challenges in AI Systems

Data Privacy and Security

Patient data includes sensitive details like medical history, diagnoses, and treatment records. Any misuse or breach can harm trust and put safety at risk. AI systems need large datasets to function, which raises the chance of exposure if protections are weak. Strong data governance helps reduce this risk. Encryption, controlled access, and clear data policies all play a part in keeping patient information safe. A custom AI strategy report can help organizations define secure practices and align AI efforts with privacy expectations.
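As one small illustration of controlled handling of patient data, the sketch below replaces a patient identifier with a keyed hash and drops direct identifiers before a record is passed to an AI pipeline. The field names (`patient_id`, `name`, `address`, `phone`) and the function names are hypothetical, and pseudonymization on its own is not full de-identification or a substitute for encryption and access control.

```python
import hashlib
import hmac


def pseudonymize(patient_id: str, secret_key: bytes) -> str:
    """Return a stable keyed hash (HMAC-SHA256) of a patient identifier."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_direct_identifiers(record: dict, secret_key: bytes) -> dict:
    """Copy a record, dropping direct identifiers and adding a pseudonymous reference.

    The set of identifier fields below is an assumption for illustration only.
    """
    direct_identifiers = {"patient_id", "name", "address", "phone"}
    cleaned = {k: v for k, v in record.items() if k not in direct_identifiers}
    cleaned["patient_ref"] = pseudonymize(record["patient_id"], secret_key)
    return cleaned


record = {"patient_id": "MRN-001", "name": "Jane Doe", "diagnosis": "asthma"}
safe_record = strip_direct_identifiers(record, secret_key=b"example-key-kept-in-a-vault")
```

Because the hash is keyed, the same patient maps to the same reference across datasets, while anyone without the key cannot recompute the mapping from a known identifier.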

Bias and Fairness

AI learns from data. If the data is not diverse, the system can produce unfair results. That can lead to unequal care for certain groups. Bias in healthcare AI creates real ethical concerns and affects patient outcomes. Balanced datasets and regular reviews help reduce this risk. Ongoing monitoring helps keep decisions fair across all patient groups. Organizations working with SaMD solutions must carefully check for bias, since these tools directly influence medical decisions and patient care.
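A regular bias review can start with something as simple as comparing how often a model correctly flags a condition in each patient group. The sketch below is a minimal example, assuming binary labels and a hypothetical record layout of `(group, actual, predicted)`. The true positive rate gap is only one of several possible fairness measures, and the sample data is made up for illustration.

```python
from collections import defaultdict


def true_positive_rate_by_group(records):
    """Compute the true positive rate per group.

    records: iterable of (group, actual, predicted) tuples with 0/1 labels.
    Groups with no positive cases are left out of the result.
    """
    positives = defaultdict(int)
    hits = defaultdict(int)
    for group, actual, predicted in records:
        if actual == 1:
            positives[group] += 1
            if predicted == 1:
                hits[group] += 1
    return {group: hits[group] / positives[group] for group in positives}


def max_rate_gap(rates):
    """Largest difference in rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Illustrative, made-up audit sample.
sample = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 0, 1),
]
rates = true_positive_rate_by_group(sample)
gap = max_rate_gap(rates)
```

A gap that grows between audits is a signal to look at the training data and the model again, not a verdict by itself.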

Lack of Transparency

Many AI systems act like black boxes. They give answers without showing how they got there. That makes doctors hesitant, because they rely on clear reasoning to make decisions. Transparency builds trust. Explainable AI offers insight into how results are reached, allowing clinicians to verify and rely on the output. When professionals understand the thinking behind the results, they feel more confident using the tool. AI automation services should include explainability in their design to encourage acceptance among clinical teams.
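To make the idea of explainability concrete, here is a minimal sketch for the simplest case, a linear (logistic) risk score, where each feature's contribution to the result can be listed directly. The feature names, weights, and bias are invented for illustration. More complex models need dedicated explanation techniques that this example does not cover.

```python
import math


def explain_linear_score(weights, bias, features):
    """Return (probability, contributions ranked by absolute size).

    Each contribution is weight * feature value, so a clinician can see
    which inputs pushed the score up or down and by how much.
    """
    contributions = {name: weights[name] * features[name] for name in weights}
    logit = bias + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return probability, ranked


# Hypothetical model parameters and patient features.
weights = {"age_decades": 0.5, "prior_admissions": 1.0}
features = {"age_decades": 4, "prior_admissions": 1}
probability, ranked = explain_linear_score(weights, bias=-3.0, features=features)
```

Showing the ranked contributions next to the score gives the clinician something to check against their own reading of the case.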

Accountability and Responsibility

When AI helps make a decision, it needs to be clear who is responsible. Questions come up when things do not go as expected. Does responsibility fall on the developer, the clinician, or the system itself? Clear governance frameworks define roles and expectations. They guide how AI tools are used and monitored in healthcare. AI implementation support helps put these governance practices in place and ensures they line up with healthcare regulations.

The Trust Gap in Healthcare AI

AI adoption in healthcare is moving fast. Many institutions are putting money into advanced systems to improve how they work and what they achieve. But while adoption speeds up, trust lags behind.

Patients worry about privacy and accuracy. They wonder how their information is used and whether AI decisions can be trusted. Doctors also have concerns. They need clarity, control, and confidence in the tools they use. This gap between using AI and trusting it creates a real barrier. Without trust, AI cannot reach its full potential. Ethical AI in healthcare helps close that gap by focusing on transparency, fairness, and accountability. An AI readiness assessment can help organizations see where they stand and what needs attention before moving forward.

How Ethical AI Builds Trust

Trust grows from practices that are consistent and open. When AI systems offer clear explanations, clinicians gain confidence in what they produce. Explainable models cut down on uncertainty and support better decisions. Protecting patient data builds trust, too. Secure systems ease concerns about how personal information is handled. Patients feel more at ease when they know their data is safe.

Fair algorithms make sure every patient is treated equally. That builds credibility and strengthens trust in AI systems. Human and AI collaboration also matters. AI helps doctors by providing insights, and doctors make the final call based on their experience and the full picture. Trust forms when AI supports, rather than replaces, the clinician. An AI adoption roadmap offers a structured way to bring AI into healthcare systems, building trust through careful, planned implementation.

Best Practices for Ethical AI Implementation

Organizations can follow a few clear practices to keep AI systems ethical. Regular assessments help uncover gaps in data, systems, and processes. Strong data governance policies protect patient information and help maintain compliance with regulations. Ongoing audits catch bias and support fairness. Continuous monitoring keeps AI outputs accurate and reliable over time. Human oversight has to stay part of every AI-assisted decision, with doctors and other healthcare professionals reviewing what AI recommends. Documented processes make responsibilities explicit and make system behavior easier to review. With these practices in place, teams working on AI use cases can keep their work grounded in ethical principles and aligned with healthcare goals.
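One way to keep human oversight in the loop is to route every AI recommendation to a clinician and use the model's confidence only to decide how urgently it is reviewed. The sketch below assumes a hypothetical `Recommendation` record, an arbitrary threshold of 0.8, and made-up queue names. Nothing in it applies a recommendation automatically.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    patient_ref: str
    suggestion: str
    confidence: float  # model-reported score in [0, 1]


def review_queue(rec: Recommendation, priority_below: float = 0.8) -> str:
    """Pick a clinician review queue; no recommendation bypasses review."""
    if not 0.0 <= rec.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return "priority_review" if rec.confidence < priority_below else "standard_review"


def triage(recs, priority_below: float = 0.8) -> dict:
    """Group recommendations by review queue."""
    queues = {"priority_review": [], "standard_review": []}
    for rec in recs:
        queues[review_queue(rec, priority_below)].append(rec)
    return queues


recs = [
    Recommendation("ref-1", "order follow-up imaging", 0.95),
    Recommendation("ref-2", "adjust dosage", 0.55),
]
queues = triage(recs)
```

The threshold is a policy choice that the clinical team should set and revisit as monitoring data comes in.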

The Future of Ethical AI in Healthcare

AI is still expanding across healthcare. New uses are emerging in diagnosis, treatment planning, and patient engagement. As adoption grows, ethical standards are getting more attention.

Regulatory frameworks are becoming clearer, helping guide how AI systems operate in healthcare. These frameworks focus on safety, openness, and accountability. Organizations that put ethics first build trust with both patients and providers. That trust strengthens their place in the healthcare system. Ethical AI will continue to shape what comes next in healthcare and define how technology fits into human care.

Conclusion

Artificial intelligence brings fresh possibilities to healthcare through better speed, accuracy, and efficiency. These gains matter for patients and providers alike. But trust is what determines how far these tools will go. Ethical AI in healthcare paves the way for technology to work alongside human values. Transparency, fairness, and accountability guide how systems function and influence decisions. When these principles are followed, AI can reshape healthcare in a way that is both responsible and dependable.

Contact us to explore how ethical AI practices can support healthcare innovation and build lasting trust.

Frequently Asked Questions

1. What Is Ethical AI in Healthcare?

Ethical AI in healthcare focuses on building AI systems that are fair, transparent, and safe, so that clinicians and patients can trust the decisions those systems support.

2. Why Is a Healthtech Engineering Partner Important for Ethical AI?

A healthtech engineering partner helps design compliant systems, ensures responsible AI use, and provides AI implementation support aligned with healthcare standards.

3. How Do Healthcare AI Services Ensure Ethical Practices?

Healthcare AI services follow strong data governance, reduce bias, and support transparent AI use cases that improve trust and reliability.

4. How Can Organizations Prepare for Ethical AI Adoption?

They start with an AI readiness assessment and create a custom AI strategy report, followed by an AI adoption roadmap for structured and ethical implementation.

5. What Technologies Support Ethical AI in Healthcare?

AI automation services and regulated SaMD solutions help maintain transparency, improve workflows, and support ethical AI practices in healthcare.